The Receipt Nobody Sees

A neon-perfect “cinematic” clip takes 20 seconds to generate. It looks weightless. That’s the illusion. The real receipt prints somewhere else: in a data hall, in a cooling loop, on a grid operator’s load forecast, and eventually in someone’s permitting meeting.

Energy-and-water scrutiny for AI data centers is no longer niche—it’s showing up in mainstream infrastructure planning and policy conversations, not just sustainability blogs. And the reason is simple: image and video generation are “fun” workloads that scale fast because they feel cheap at the point of use.

If you only measure the footprint at the moment a user clicks “Generate,” you’ll miss most of the story. The footprint is distributed. It’s also uneven: the same prompt can land on different hardware, in different regions, under different cooling designs, with very different marginal impacts.

The U.S. Department of Energy frames data centers as a major efficiency and infrastructure focus area, which matters because AI media generation is increasingly a first-class tenant inside those buildings.

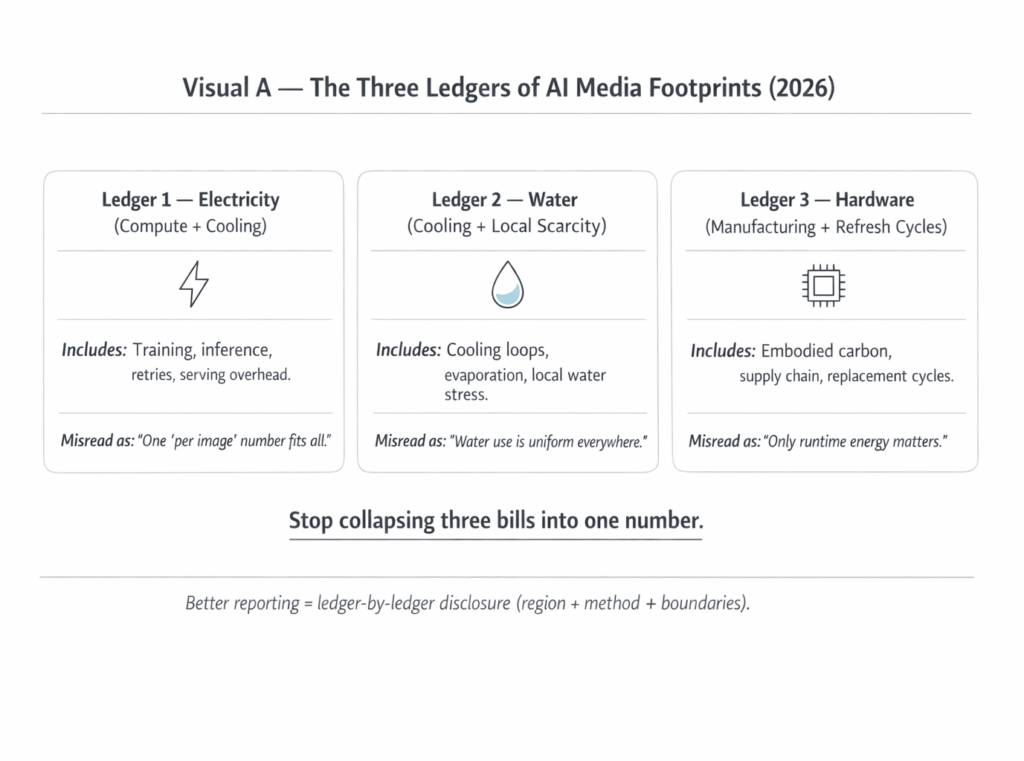

Here’s the clean way to think about it: environmental impact is not one number. It’s multiple ledgers. And AI images/video touch all of them.

The Footprint Has Three Ledgers (Not One)

Ledger 1: Electricity (Compute + Cooling)

Electricity is the obvious ledger, but “electricity” here really means a bundle: compute cycles, memory bandwidth, storage, networking, and the overhead of keeping servers inside safe thermal ranges. The part that people miss: inference is not always “small” compared to training when the product is high-volume generation. Training is the headline; serving is the habit.

Video generation is heavier than single-image generation for boring reasons that still matter: more frames, more sampling steps, more opportunities to retry, and more time keeping GPUs occupied. Even when models get more efficient, usage patterns often expand to fill the slack.

The IEA’s analysis of AI-driven power demand is useful here because it treats AI as an electricity-demand problem, not just a “software innovation” story.

One more detail that’s easy to ignore: where the workload runs matters. A generation job running on a grid with a higher-carbon marginal mix is not equivalent to the same job running where marginal generation is cleaner. That doesn’t mean you can eyeball the footprint from a country-level chart. It means location-aware accounting is hard—and marketing tends to be simpler than reality.
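To make the location point concrete, here is a minimal back-of-envelope sketch in Python. It assumes energy scales with accelerator count, average power draw, and occupied time, then applies a single overhead multiplier for cooling and facility losses. Every name and number in it (`GenerationJob`, the wattage, the overhead factor, the two grid intensities) is a placeholder for illustration, not a measurement of any real model, facility, or region.

```python
from dataclasses import dataclass


@dataclass
class GenerationJob:
    gpu_count: int          # accelerators held for the job (hypothetical)
    gpu_power_watts: float  # assumed average draw per accelerator
    seconds: float          # wall-clock time the accelerators stay occupied


def facility_energy_kwh(job: GenerationJob, overhead_factor: float) -> float:
    """Accelerator energy scaled by a facility overhead multiplier (>= 1.0)."""
    if overhead_factor < 1.0:
        raise ValueError("overhead_factor must be at least 1.0")
    # watts * seconds = joules; 3.6 million joules = 1 kWh
    it_kwh = job.gpu_count * job.gpu_power_watts * job.seconds / 3_600_000
    return it_kwh * overhead_factor


def emissions_grams(job: GenerationJob, overhead_factor: float,
                    grid_g_per_kwh: float) -> float:
    """Operational emissions for one job at a given grid intensity."""
    return facility_energy_kwh(job, overhead_factor) * grid_g_per_kwh


# Placeholder values only: one 20-second clip on 8 accelerators.
clip = GenerationJob(gpu_count=8, gpu_power_watts=500.0, seconds=20.0)
hypothetical_regions = {"region_a": 100.0, "region_b": 600.0}  # gCO2e per kWh

for region, intensity in hypothetical_regions.items():
    grams = emissions_grams(clip, overhead_factor=1.2, grid_g_per_kwh=intensity)
    print(f"{region}: {grams:.2f} g CO2e")
```

The same job comes out six times heavier in the second hypothetical region purely because of the grid factor. The sketch ignores memory, storage, and networking, and it uses one intensity per region; real accounting would need the marginal mix at the hour the job ran, which is the hard part.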

IEA’s broader tracking on data centers and networks helps contextualize how demand scales and why marginal electricity matters.

Ledger 2: Water (Cooling + Local Scarcity)

Water is the ledger that triggers local politics fastest. Not because every data center is “draining a river,” but because water impact is regional and seasonal. A facility that is “fine” in one basin becomes contentious in another, especially during drought restrictions and competing municipal needs.

Water use in data centers is closely tied to cooling design—air cooling, chilled-water systems, evaporative approaches, or hybrids—each with different tradeoffs between electricity, water, capital cost, and performance. A useful starting point is to understand how operators define and measure water intensity.

If you want a metric vocabulary: Water Usage Effectiveness (WUE), usually expressed as liters of water per kilowatt-hour of IT equipment energy, is commonly used to discuss water intensity in data centers, even though reporting practices and boundaries vary across operators. That “boundary problem” (what’s included, what’s excluded) is exactly where PR-friendly claims can hide real impact.
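As a rough illustration of how a WUE figure turns into liters, and how much the boundary choice changes the answer, here is a small Python sketch. The WUE values, the `offsite_l_per_kwh` factor (water consumed upstream to generate the electricity), and the function name are assumptions chosen for the example, not reported figures from any operator.

```python
def water_liters(it_energy_kwh: float,
                 wue_l_per_kwh: float,
                 offsite_l_per_kwh: float = 0.0) -> float:
    """Estimate water use for a block of IT energy.

    on-site  = IT energy * WUE (liters per kWh of IT energy)
    off-site = IT energy * assumed water intensity of the electricity supply
    Leaving offsite_l_per_kwh at 0.0 gives an on-site-only boundary.
    """
    if min(it_energy_kwh, wue_l_per_kwh, offsite_l_per_kwh) < 0:
        raise ValueError("inputs must be non-negative")
    onsite = it_energy_kwh * wue_l_per_kwh
    offsite = it_energy_kwh * offsite_l_per_kwh
    return onsite + offsite


it_kwh = 1000.0  # hypothetical block of IT energy

# Two hypothetical cooling designs, on-site boundary only.
print("evaporative, on-site:", water_liters(it_kwh, wue_l_per_kwh=1.8))
print("air-cooled, on-site: ", water_liters(it_kwh, wue_l_per_kwh=0.2))

# Same air-cooled design with a wider boundary that counts upstream water.
print("air-cooled, wider:   ",
      water_liters(it_kwh, wue_l_per_kwh=0.2, offsite_l_per_kwh=2.0))
```

With these made-up inputs, the "low-water" design looks larger than the evaporative one as soon as the boundary widens. That is the boundary problem in miniature: the number depends on what the reporter decided to count.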

This is also where the broader infrastructure context matters. Cooling water isn’t just “a sustainability issue.” It becomes a siting constraint. If a region is already stressed, scaling AI media workloads can collide with local water governance faster than anyone expects.

Why AI infrastructure depends on water

Ledger 3: Hardware (Manufacturing + Refresh Cycles)

Electricity and water are the operating ledgers. Hardware is the embodied ledger. GPUs, servers, racks, networking gear, and storage are manufactured, transported, and replaced on refresh cycles that are driven by performance jumps and competitive pressure. That embodied impact doesn’t show up in a “per prompt” number, but it’s real—and it accumulates when fleets scale.

Lifecycle accounting is the right lens for hardware impact because it forces a boundary: manufacturing, use-phase, and end-of-life. If a company only talks about operational energy and ignores embodied impacts, you’re missing a chunk of the footprint.
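One common way to bring the embodied ledger into view is to amortize manufacturing impact over the hardware's service life. The Python sketch below does that under loud assumptions: the embodied figure, the yearly output volume, and the idea that volume stays constant every year are all hypothetical placeholders, and end-of-life impact is left out entirely.

```python
def embodied_g_per_output(embodied_kg_co2e: float,
                          service_years: float,
                          outputs_per_year: float) -> float:
    """Spread a one-time manufacturing footprint across lifetime outputs."""
    if service_years <= 0 or outputs_per_year <= 0:
        raise ValueError("service_years and outputs_per_year must be positive")
    lifetime_outputs = service_years * outputs_per_year
    return embodied_kg_co2e * 1000.0 / lifetime_outputs  # kg -> g


# Hypothetical server: 1500 kg CO2e embodied, one million outputs per year.
for years in (5, 3):
    share = embodied_g_per_output(1500.0, years, 1_000_000)
    print(f"{years}-year refresh: {share:.2f} g CO2e per output")
```

Shortening the refresh cycle from five years to three raises the embodied share per output even though nothing about the operating footprint changed. That is why refresh assumptions belong in the disclosure, not in a footnote.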

E-waste is the downstream shadow of refresh cycles. It’s not uniquely “AI-caused,” but AI-driven buildouts accelerate demand for high-end components, and turnover pressure increases when each new generation shifts the cost/performance curve.

The Global E-waste Monitor is a widely cited baseline for understanding scale and trends in electronic waste globally.

Why “Per Image” Numbers Mislead

“Per image” footprint claims are popular because they’re legible. They’re also easy to misuse. The marginal cost of an output depends on model choice, resolution, sampling steps, safety filters, moderation reruns, and retries. A user who generates 30 variations for a single post is not the same as a user who generates one draft, edits, and stops.
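A crude way to see how quickly those variables compound is to compare workflows against a baseline using a relative compute proxy. The Python sketch below assumes compute scales linearly with pixel count, sampling steps, frames, and attempts. That linearity is a simplifying assumption for illustration; real models may scale differently, and the `Settings` class and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Settings:
    width: int
    height: int
    steps: int         # sampling steps per generation
    frames: int = 1    # 1 for a still image
    attempts: int = 1  # generations run before one asset ships


def compute_units(s: Settings) -> int:
    """Proxy for work done per shipped asset under the linear assumption."""
    return s.width * s.height * s.steps * s.frames * s.attempts


def relative_compute(s: Settings, baseline: Settings) -> float:
    """Work per shipped asset relative to a baseline workflow."""
    return compute_units(s) / compute_units(baseline)


baseline = Settings(width=1024, height=1024, steps=30)               # one full-size draft
spinner = Settings(width=1024, height=1024, steps=30, attempts=30)   # 30 variations
drafter = Settings(width=512, height=512, steps=20, attempts=5)      # small, quick drafts

print("30 full-size variations:", relative_compute(spinner, baseline))
print("5 low-res drafts:       ", round(relative_compute(drafter, baseline), 2))
```

Under these assumptions, five low-resolution drafts cost less than one full-size generation, while thirty full-size variations cost thirty times as much. The "per image" number is the same in both cases; the footprint per shipped asset is not.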

The IEA’s framing of AI energy demand is a good reminder that usage behavior is a first-order variable, not an afterthought.

Then there’s infrastructure sharing. In large-scale serving, the marginal energy per output can drop when utilization is high (fewer idle resources). But the opposite can also happen: peak-time demand can trigger less efficient operation, higher cooling overhead, or a shift toward more carbon-intensive marginal electricity. “Average” numbers flatten those differences.
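The utilization half of that argument can be sketched with a toy model in Python: a server draws some power whether or not it is busy, plus an increment for each output. The idle wattage, per-output energy, and throughput figures below are invented for illustration, and the model deliberately leaves out the peak-time effects, which would push in the opposite direction.

```python
def wh_per_output(idle_watts: float,
                  active_wh_per_output: float,
                  outputs_per_hour: float) -> float:
    """Average energy per output over one hour.

    Idle draw for the hour (idle_watts * 1 h) is shared across all outputs,
    then each output adds its own active energy.
    """
    if outputs_per_hour <= 0:
        raise ValueError("outputs_per_hour must be positive")
    return idle_watts / outputs_per_hour + active_wh_per_output


# Hypothetical server: 400 W idle draw, 2 Wh of active energy per output.
for throughput in (100, 1000):
    per_output = wh_per_output(400.0, 2.0, throughput)
    print(f"{throughput} outputs/hour: {per_output:.1f} Wh per output")
```

In this toy setup the per-output figure falls by more than half between the quiet hour and the busy one, even though the model and hardware are identical. An "average" number quoted without its utilization assumption hides exactly this spread.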

Data center energy overhead is commonly discussed via Power Usage Effectiveness (PUE), the ratio of total facility energy to IT equipment energy. That is useful until it becomes a slogan instead of a measurement with context.

The most honest statement you can make about “per image” impact is: it varies. If someone presents a single fixed number without explaining boundaries, region, and workload assumptions, treat it as a marketing approximation, not a scientific claim.

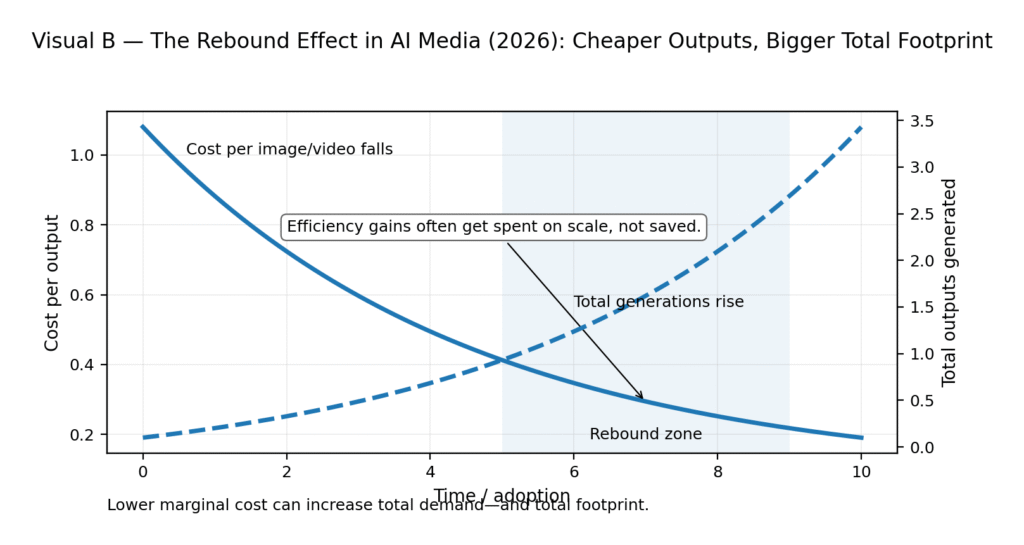

The Rebound Effect: When Efficiency Increases Total Consumption

Efficiency improvements are real. They also don’t guarantee lower total impact. If generating an image gets cheaper and faster, people generate more images. If video generation gets smoother, people experiment more, iterate more, and ship more content. The total number of outputs can rise faster than efficiency reduces per-output cost. That’s the rebound effect in plain terms.

The rebound effect is a well-documented phenomenon in energy economics: efficiency can reduce unit cost while increasing total consumption. The same dynamic applies to digital services when friction drops and usage expands.
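The arithmetic behind the rebound is short enough to write down. Total consumption is per-output cost times the number of outputs, so the Python sketch below multiplies the two changes together. The 40% saving and the doubling of volume are hypothetical inputs, not observed figures.

```python
def total_change(per_output_saving: float, volume_growth: float) -> float:
    """Fractional change in total consumption.

    per_output_saving: 0.4 means each output costs 40% less than before.
    volume_growth:     1.0 means output volume doubled.
    """
    if not 0 <= per_output_saving < 1:
        raise ValueError("per_output_saving must be in [0, 1)")
    if volume_growth <= -1:
        raise ValueError("volume_growth must be greater than -1")
    return (1 - per_output_saving) * (1 + volume_growth) - 1


def break_even_growth(per_output_saving: float) -> float:
    """Volume growth at which the efficiency gain is fully cancelled out."""
    if not 0 <= per_output_saving < 1:
        raise ValueError("per_output_saving must be in [0, 1)")
    return 1 / (1 - per_output_saving) - 1


# Hypothetical scenario: 40% cheaper per output, twice as many outputs.
print(f"total change: {total_change(0.4, 1.0):+.0%}")
print(f"break-even volume growth: {break_even_growth(0.4):.0%}")
```

In this scenario a 40% efficiency gain paired with doubled usage leaves the total about 20% higher, and any volume growth beyond roughly 67% wipes out the saving.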

This is why “my model is more efficient” is not the same as “my product reduces environmental impact.” A more efficient model can still drive a larger footprint if it massively expands use. The right question is not just “How efficient is the model?” It’s “What happens to total demand when this becomes easier?”

The “Clean AI” Claims: What’s Solid vs What’s Marketing

Tier 1: Claims That Can Be Verified

These are claims anchored in disclosure, boundaries, and auditability. Examples include: reporting the grid region where workloads run, publishing methodology, separating operational energy from embodied impacts, and providing third-party verification where possible.

The GHG Protocol Product Standard is a reference point for what “counting” looks like when you’re serious about lifecycle boundaries.

Some organizations do publish credible sustainability disclosures, including energy procurement detail and facility-level reporting. Those disclosures can still be incomplete, but at least you can interrogate methodology.

Google’s Environmental Report is a useful example of public reporting scope and method (even if you should still read it skeptically).

Tier 2: Claims That Are Directionally True but Easy to Abuse

This is where you’ll see phrases like “powered by renewable energy” without time-matching, location-matching, or workload-specific reporting. It can be directionally good—cleaner procurement can reduce impact—but it’s also a common zone for vague language that masks hard questions about marginal electricity and peak load.
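Time-matching is easier to see with a toy example. The Python sketch below compares a whole-period match (total clean energy procured against total load) with an hour-by-hour match, where surplus in one hour cannot cover a shortfall in another. The four-hour profiles are invented, and the function names are illustrative rather than any standard's terminology.

```python
from typing import Sequence


def period_match(load_kwh: Sequence[float], clean_kwh: Sequence[float]) -> float:
    """Share of load covered when totals are compared over the whole period."""
    return min(1.0, sum(clean_kwh) / sum(load_kwh))


def hourly_match(load_kwh: Sequence[float], clean_kwh: Sequence[float]) -> float:
    """Share of load covered when each hour must be matched on its own."""
    if len(load_kwh) != len(clean_kwh):
        raise ValueError("profiles must cover the same hours")
    matched = sum(min(load, clean) for load, clean in zip(load_kwh, clean_kwh))
    return matched / sum(load_kwh)


# Hypothetical profiles: flat load, clean supply only in the middle hours.
load = [10.0, 10.0, 10.0, 10.0]
clean = [0.0, 20.0, 20.0, 0.0]

print("whole-period match:", period_match(load, clean))
print("hourly match:      ", hourly_match(load, clean))
```

Both results describe the same procurement. Matched over the whole period it reads as fully renewable; matched hour by hour, half the load ran on whatever the grid was supplying at the time.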

IEA’s work on data centers and AI energy demand provides context for why time/location matching matters when demand rises.

Tier 3: Claims That Should Trigger Skepticism

If there’s no methodology, no boundaries, no region information, and no disclosure about cooling design or water intensity, it’s not a footprint claim—it’s branding. “Carbon neutral” claims without transparent accounting often rely heavily on offsets, and offsets debates are deep and messy. If a company isn’t willing to show its math, you’re not supposed to verify it. You’re supposed to trust it.

The IEA’s Energy and AI report is blunt about the scale of demand and the need for credible measurement, which makes “trust me” claims feel especially thin.

The User’s Role: Small Habits That Actually Move the Needle

I’ve watched teams burn hours generating “one more variation” because it’s fun and instant—then ship a single asset anyway. That loop is where waste quietly accumulates. You can’t fix infrastructure siting from your laptop, but you can reduce pointless generation.

Low-effort ways to reduce AI media waste

- Draft at lower resolution first; upscale only once you’re committed to the concept.

- Reduce retries: plan the shot list, then generate with fewer “random spins.”

- Reuse prompts and seeds when iterating; don’t restart from scratch every time.

- Generate fewer variations by doing a rough storyboard first (even text-only).

- Batch your workflow: decide criteria, then generate once with clear constraints.

- Prefer providers that publish transparent environmental reporting and methodology (GHG Protocol Product Standard is a good baseline for what “methodology” means).

- If you’re producing video, limit clip length during exploration; expand only after approvals.

Policy Catch-Up: What Regulators Are Starting to Ask For

Policy is drifting toward measurement and disclosure, because capacity expansion touches public infrastructure: grids, water, land, and permitting. In the EU, energy policy has moved toward stronger efficiency and reporting expectations in multiple sectors, and data centers are increasingly part of that conversation.

The EU’s Energy Efficiency Directive is a key reference point for the direction of travel on efficiency and reporting frameworks.

In the U.S., federal and state attention is uneven, but the broad arc is similar: more interest in reporting, efficiency, and infrastructure planning as data center load grows. The IEA’s analysis is helpful again because it frames AI demand as a grid planning issue, not just “tech growth.”

This is also where readers should connect the dots between “fun media generation” and the physical system that carries it.

The physical world behind digital AI

The Practical Standard Readers Can Reuse

Minimum Disclosure Standard — AI Image/Video Tools

- Boundary: training vs inference vs serving (state what’s counted)

- Region: where workloads run (grid region, not just “country”)

- Energy: method for estimating kWh and time/window assumptions

- Water: cooling type + how water intensity is measured (WUE or equivalent)

- Hardware: refresh cycle assumptions and embodied accounting approach

- Verification: whether methods are audited or reproducible

- User controls: knobs that meaningfully reduce compute (resolution/steps/length)

- Change log: model versioning + deployment changes that affect footprint
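If you want to apply the standard mechanically, for example when comparing vendors, one option is to treat it as a checklist and flag what is missing. The Python sketch below is one possible encoding: the `Disclosure` class, its field names, and the sample entries are hypothetical, and a filled-in field says nothing about whether the disclosure is any good.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class Disclosure:
    """One field per item in the minimum disclosure standard."""
    boundary: Optional[str] = None       # what is counted: training, inference, serving
    region: Optional[str] = None         # grid region where workloads run
    energy: Optional[str] = None         # kWh estimation method and time window
    water: Optional[str] = None          # cooling type and water intensity metric
    hardware: Optional[str] = None       # refresh cycle and embodied accounting
    verification: Optional[str] = None   # audited or reproducible methods
    user_controls: Optional[str] = None  # resolution, steps, length
    change_log: Optional[str] = None     # model and deployment changes


def missing_items(disclosure: Disclosure) -> List[str]:
    """Return the names of items the provider left blank."""
    return [f.name for f in fields(disclosure) if not getattr(disclosure, f.name)]


# Hypothetical vendor that only states its boundary and region.
vendor = Disclosure(boundary="inference only", region="example-grid-region")
print("missing:", ", ".join(missing_items(vendor)))
```

A provider that leaves most of these blank is making a Tier 3 claim, whatever the landing page says.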

By Sami Hayes – AIchronicle Insights