The Trigger Event

On December 1, 2023, an amendment to Federal Rule of Evidence 702, the rule that governs expert testimony, took effect in U.S. federal courts. The change wasn’t about “AI” by name. It was about something courts keep getting wrong: letting shaky expert methods slide because the fight sounds technical and time is short. The amended text requires the proponent to show that it is “more likely than not” that each reliability requirement is met, and the committee notes are blunt about the judge’s gatekeeping role. https://www.law.cornell.edu/rules/fre/rule_702

Now put that against what’s happening in evidence rooms in 2026: models that classify, match, rank, infer, and “enhance” are appearing in investigative workflows, vendor products, and litigation support. Some are excellent. Some are basically marketing with math.

Courts aren’t just deciding whether AI can be used. They’re deciding what counts as reliable knowledge when a black box is part of the chain.

And that chain matters. Authentication is still the threshold question. The U.S. federal baseline is Rule 901: the proponent must “produce evidence sufficient to support a finding that the item is what the proponent claims it is.” https://www.law.cornell.edu/rules/fre/rule_901

AI doesn’t replace those standards. It stress-tests them.

What People Get Wrong About This Topic

Wrong assumption #1: “If the model is accurate in the lab, it’s accurate in court.” → Reality check: Courts deal with messy inputs: low-light video, compression artifacts, partial prints, noisy audio, missing metadata. Lab benchmarks often don’t match courtroom conditions, and even strong systems can fail under distribution shift. NIST’s Face Recognition Vendor Test (FRVT) program is useful precisely because it measures performance across conditions and demographics, and it shows why “accuracy” is not a single number you can carry into every context. https://www.nist.gov/programs-projects/face-recognition-vendor-test-frvt

Wrong assumption #2: “AI evidence is neutral—machines don’t lie.” → Reality check: A model can be non-human and still be biased through training data, design choices, thresholds, and operator behavior. NIST’s demographic effects work in face recognition is a concrete example of how error rates can vary across groups and conditions. https://www.nist.gov/publications/face-recognition-vendor-test-part-3-demographic-effects

Wrong assumption #3: “If an expert explains it, the jury is safe.” → Reality check: Explanation quality is not the same thing as method validity. Rule 702 requires that the proponent show the expert’s opinion is based on sufficient facts/data and reliable principles/methods, and that the expert reliably applied them. “Trust me, I’m an expert” is exactly what the rule is trying to prevent. https://www.law.cornell.edu/rules/fre/rule_702

Wrong assumption #4: “Deepfakes are the only AI-evidence risk.” → Reality check: Synthetic media is real, but the quieter danger is “AI laundering”: a weak inference gets wrapped in confident language, a vendor report, and an expert’s signature, then it enters the record looking official. The court’s job becomes less about truth and more about whether anyone can afford to challenge the pipeline. The NIST AI Risk Management Framework is a good anchor here because it treats AI risk as an ongoing lifecycle problem—governance, measurement, monitoring—not a one-time accuracy claim. https://www.nist.gov/itl/ai-risk-management-framework

The Mechanism Map (How it actually works)

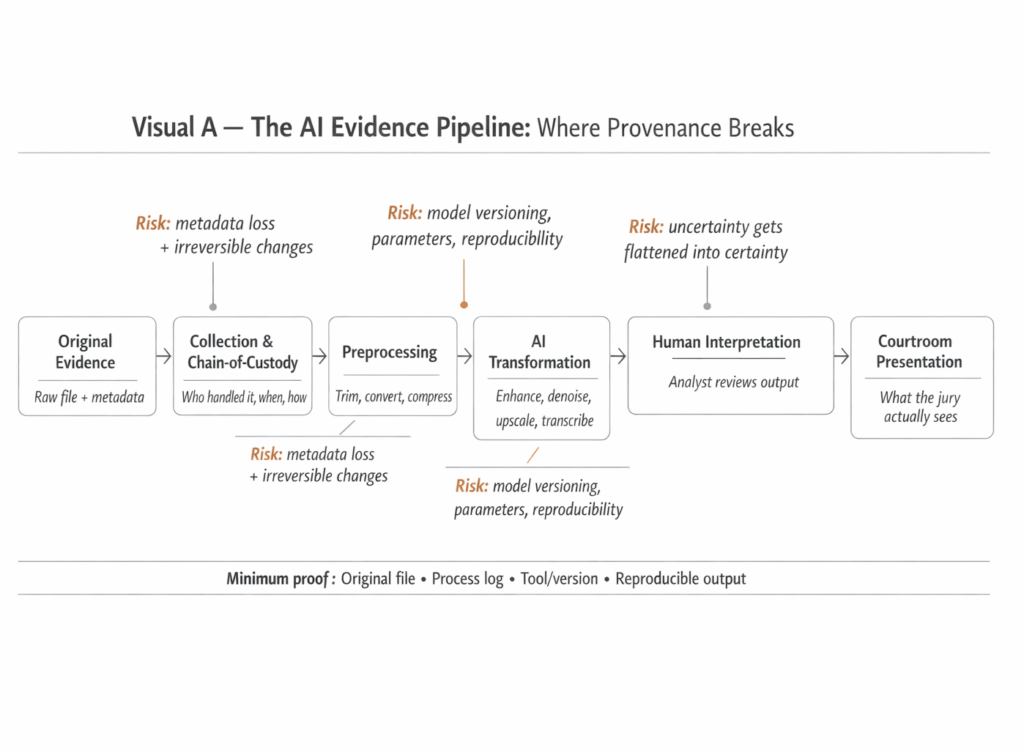

Moving Part 1: Authentication and provenance

What it is: The court needs a defensible story for what the evidence is, where it came from, and whether it was altered. In the U.S., the core baseline is Rule 901 authentication. https://www.law.cornell.edu/rules/fre/rule_901

What fails in real environments: AI tools often enter midstream: “enhancement” of video, de-noising audio, upscaling, reconstruction, transcription. Each step can change the artifact in ways a layperson can’t detect. Without a tight chain-of-custody and repeatable process logs, the argument becomes vibes: “it looks clearer, so it’s better.”

What signals it’s failing: Missing original files, missing metadata, unclear operator steps, no versioning, no record of parameters, no ability for the other side to replicate outputs. Courts also care about “best evidence” concepts and whether the offered item is a faithful representation. If the original exists and you don’t produce it, expect trouble. (See generally Rule 1002, “Requirement of the Original,” commonly called the best evidence rule.) https://www.law.cornell.edu/rules/fre/rule_1002
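The process log described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a forensic standard: the field names, the `log_step` and `chain_is_consistent` helpers, and the example tool names are all hypothetical. The idea is only that each step records the tool, version, parameters, and operator, plus a SHA-256 hash of its input and output, so the other side can check that no step happened off the record.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class TransformationStep:
    # Hypothetical record layout; adapt the fields to the lab's own procedure.
    tool_name: str
    tool_version: str
    parameters: dict
    operator: str
    input_sha256: str
    output_sha256: str
    timestamp_utc: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_step(tool_name, tool_version, parameters, operator, input_bytes, output_bytes):
    """Build one log entry that ties a tool run to its exact input and output."""
    return TransformationStep(
        tool_name=tool_name,
        tool_version=tool_version,
        parameters=parameters,
        operator=operator,
        input_sha256=sha256_hex(input_bytes),
        output_sha256=sha256_hex(output_bytes),
    )


def chain_is_consistent(original_sha256, steps):
    """True if every step starts from the previous step's output, beginning at the original."""
    expected = original_sha256
    for step in steps:
        if step.input_sha256 != expected:
            return False
        expected = step.output_sha256
    return True


# Stand-in bytes; in practice these would be read from the preserved files.
original = b"original video bytes"
denoised = b"video bytes after denoising"
upscaled = b"video bytes after upscaling"

steps = [
    log_step("example-denoiser", "1.4.2", {"strength": 0.3}, "analyst_a", original, denoised),
    log_step("example-upscaler", "0.9.0", {"scale": 2}, "analyst_a", denoised, upscaled),
]
print(chain_is_consistent(sha256_hex(original), steps))
```

A break in the chain does not prove tampering. It shows that a step is unaccounted for, which is exactly what the opposing party needs to know before the output is treated as a faithful version of the original.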

Moving Part 2: Expert gatekeeping and method validity

What it is: Expert testimony is allowed only if it meets reliability requirements under Rule 702. Judges are supposed to act as gatekeepers, not passive referees. https://www.law.cornell.edu/rules/fre/rule_702

What fails in real environments: Courts can get trapped between two bad options: accept the expert because the tech is “too advanced to question,” or exclude useful evidence because nobody can explain it cleanly. The Daubert line of cases is the reason Rule 702 reads the way it does; it pushed judges to ask about testing, error rates, standards, and general acceptance—then to actually enforce those questions. (Cornell’s Rule 702 notes summarize that history directly.) https://www.law.cornell.edu/rules/fre/rule_702

What signals it’s failing: “Black box” claims without documented testing, no disclosed error rates in relevant conditions, no clear thresholds, no peer-reviewed validation, and no clarity on how human judgment enters the result. If the method can’t be audited, the court is being asked to accept authority, not evidence.

Moving Part 3: Statistical framing and the “certainty voice”

What it is: Many AI-derived claims are probabilistic (match scores, likelihood ratios, confidence bands), but they get translated into categorical courtroom language (“match,” “identified,” “this is the person”).

What fails in real environments: A probability can be technically correct and still mislead if the reference class is wrong, the base rate is ignored, or the model is evaluated on a different population than the case. Forensic science has been warning about overstated certainty for years; the 2009 National Research Council report is still the clearest institutional critique of weak validation and inconsistent standards across forensic methods. https://nap.nationalacademies.org/catalog/12589/strengthening-forensic-science-in-the-united-states-a-path-forward
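The base-rate point is easier to see with numbers. The sketch below applies Bayes’ rule to a hypothetical one-to-many search. The 99% hit rate, the 0.1% false match rate, and the 100,000-person candidate pool are invented for illustration and do not describe any real system.

```python
def posterior_true_match(prior, true_positive_rate, false_positive_rate):
    """Bayes' rule: probability the flagged person is the source, given a reported match."""
    true_hits = true_positive_rate * prior
    false_hits = false_positive_rate * (1.0 - prior)
    return true_hits / (true_hits + false_hits)


# Hypothetical figures, chosen only to illustrate the effect of the base rate.
pool_size = 100_000
prior = 1 / pool_size   # one true source somewhere in the candidate pool
tpr = 0.99              # system flags the true source 99% of the time
fpr = 0.001             # system wrongly flags a non-source 0.1% of the time

print(f"{posterior_true_match(prior, tpr, fpr):.4f}")  # about 0.0098, i.e. roughly 1%
```

With the same tool and the same error rates, if other evidence had already narrowed the pool to two people (a prior of 0.5), the formula gives about 0.999. The score didn’t change; the reference class did.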

What signals it’s failing: Reports that hide uncertainty, experts who can’t articulate error rates, and methods that have not been independently validated. When a claim is “high confidence” but nobody can show how confidence was calibrated, it’s branding.
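One way to test whether “high confidence” means anything is to compare stated confidence with how often the system was actually right on labeled validation cases. The sketch below bins predictions by confidence and reports the gap, a quantity often summarized as expected calibration error. The function names and the ten validation cases are hypothetical, and a real validation set would need to be far larger and matched to case conditions.

```python
def calibration_table(confidences, outcomes, n_bins=5):
    """Group predictions into confidence bins; return (count, mean confidence, observed accuracy) per bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, correct in zip(confidences, outcomes):
        index = min(int(conf * n_bins), n_bins - 1)  # a confidence of 1.0 goes in the top bin
        bins[index].append((conf, correct))
    rows = []
    for items in bins:
        if not items:
            continue
        mean_conf = sum(c for c, _ in items) / len(items)
        observed = sum(y for _, y in items) / len(items)
        rows.append((len(items), mean_conf, observed))
    return rows


def expected_calibration_error(confidences, outcomes, n_bins=5):
    """Weighted average gap between stated confidence and observed accuracy."""
    total = len(confidences)
    rows = calibration_table(confidences, outcomes, n_bins)
    return sum(count / total * abs(mean_conf - observed) for count, mean_conf, observed in rows)


# Hypothetical validation results: the tool says 90% every time but is right half the time.
stated = [0.9] * 10
was_correct = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

print(round(expected_calibration_error(stated, was_correct), 3))  # about 0.4
```

A gap near zero means the stated confidence tracks reality on that test set. A gap of 0.4 means “90% confident” is a label, not a measurement.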

The Incentive Layer (Follow the human motives)

Here’s the part many articles dodge: the courtroom isn’t a lab. It’s an adversarial system with budgets, deadlines, and reputations.

Prosecutors want tools that scale. Defense teams want time and access to challenge methods. Vendors want adoption, and adoption favors simplicity: a clean output, a clean “match,” a clean PDF. Labs want throughput and fewer backlog headlines. Judges want hearings that don’t turn into multi-day tutorials.

So what happens? AI becomes a pressure release valve. The tool “clears” cases faster. The report looks professional. The human signing the report gets shielded by complexity. If the output is wrong, blame can diffuse across software, lab procedure, and expert interpretation.

This is why governance is not optional. If you don’t know who audits the model, you don’t know what the model is worth when liberty is on the line. That’s the same accountability gap I’ve written about in Who Audits the Algorithms?

And it’s why synthetic media isn’t a side issue anymore. When the cost of generating persuasive artifacts drops, the legal system’s verification burden rises. That risk rhymes with The End of Reality—not because “nothing is real,” but because verifying what’s real becomes expensive.

“If This Is True, Then We Should See…”

If AI evidence is going to stay—and it will—then the next 12–24 months should show measurable shifts:

- More explicit Rule 702 challenges focused on application, not theory. The rule’s 2023 amendment emphasizes that the proponent must show the expert reliably applied methods to the case facts—not just that the method exists. https://www.uscourts.gov/sites/default/files/22-197_final_package_0.pdf

- Courts demanding stronger authentication records for AI-transformed media. Not because judges love tech, but because Rule 901 forces it when provenance is contested. https://www.law.cornell.edu/rules/fre/rule_901

- Wider use of standardized evaluation language (error rates, test conditions, thresholds). NIST-style benchmarking approaches will become the model for what “serious” validation looks like, especially for face-related claims. https://www.nist.gov/programs-projects/face-recognition-vendor-test-frvt

- Policy and procurement documents adding auditability clauses. The NIST AI RMF is already a common reference point for governance language (“govern,” “map,” “measure,” “manage”). Expect it to migrate into contracts. https://www.nist.gov/itl/ai-risk-management-framework

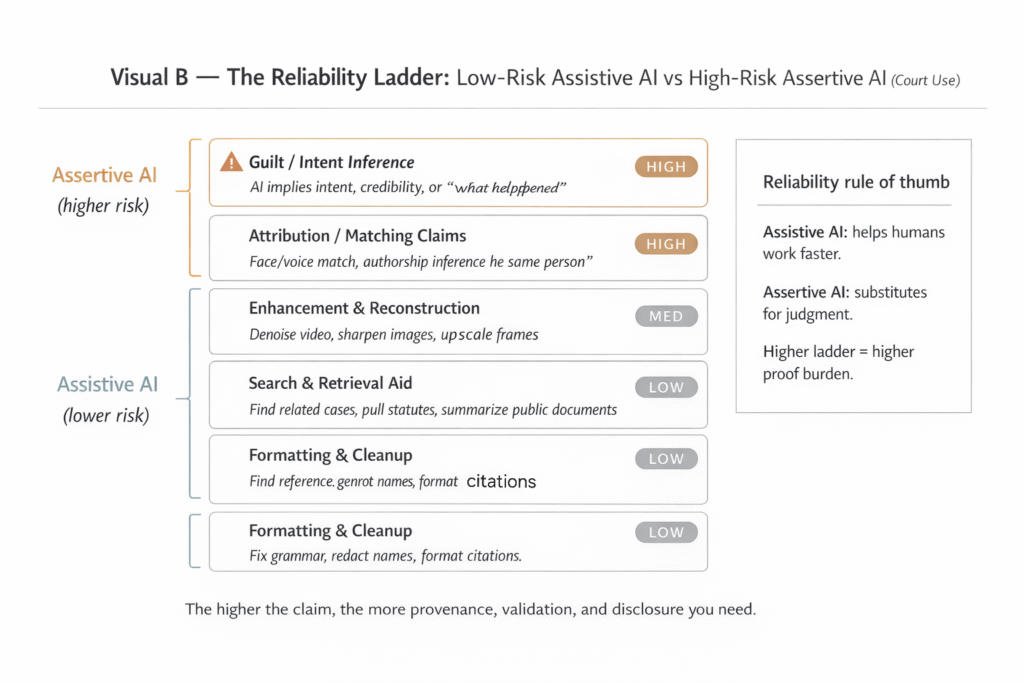

- A split between “assistive AI” and “assertive AI” in courtroom posture. Assistive AI supports human review (triage, search, transcription with verification). Assertive AI makes a claim about identity or intent. Courts will tolerate the first more easily than the second unless validation and transparency improve.

The Decision Checklist (Reader can use tomorrow)

A practical checklist for evaluating claims about AI evidence in courtrooms

- Ask what rule the evidence is trying to satisfy. Authentication (Rule 901) and expert reliability (Rule 702) are different battles. Start there. https://www.law.cornell.edu/rules/fre/rule_901 and https://www.law.cornell.edu/rules/fre/rule_702

- Demand the original artifact and the full transformation log. If AI “enhanced” something, you need the pre-AI version and the exact process to reproduce it (tool name, version, settings, operator). (Best evidence principle: Rule 1002.) https://www.law.cornell.edu/rules/fre/rule_1002

- Insist on performance evidence in conditions that resemble the case. Lab accuracy claims mean little if the case input is low-quality video or partial audio. NIST FRVT is a concrete model for how condition-specific evaluation should look. https://www.nist.gov/programs-projects/face-recognition-vendor-test-frvt

- Pin down the threshold and what it implies. “Match score” without a threshold and error tradeoff is not evidence; it’s a number. Rule 702 is built to force this kind of clarity. https://www.law.cornell.edu/rules/fre/rule_702

- Check independence: who validated it, and can the other side test it? The National Academies’ forensic science critique is a reminder that self-asserted reliability is historically common—and often wrong. https://nap.nationalacademies.org/catalog/12589/strengthening-forensic-science-in-the-united-states-a-path-forward

- Separate “assistive” from “assertive” outputs. An AI transcript that is verified line-by-line is not the same as an AI identity claim. Treat them differently in court narratives and standards.

- Require a plain-language uncertainty statement. If the expert can’t explain uncertainty without hand-waving, the jury will hear certainty anyway.
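The threshold item in the checklist can be made concrete with a short Python sketch. It assumes the tool declares a match when a similarity score is at or above a threshold, which is a common design but an assumption here. The scores are invented, and the function name is hypothetical.

```python
def error_rates_at_threshold(genuine_scores, impostor_scores, threshold):
    """Return (false_match_rate, false_non_match_rate) for the rule 'match if score >= threshold'."""
    false_matches = sum(1 for s in impostor_scores if s >= threshold)
    false_non_matches = sum(1 for s in genuine_scores if s < threshold)
    return (
        false_matches / len(impostor_scores),
        false_non_matches / len(genuine_scores),
    )


# Invented validation scores: same-person pairs and different-person pairs.
genuine = [0.91, 0.85, 0.78, 0.66, 0.95]
impostor = [0.12, 0.33, 0.48, 0.71, 0.25]

for threshold in (0.5, 0.7, 0.8):
    fmr, fnmr = error_rates_at_threshold(genuine, impostor, threshold)
    print(f"threshold={threshold}: false match rate={fmr}, false non-match rate={fnmr}")
```

On this toy data, raising the threshold from 0.5 to 0.8 removes the false match but misses two of the five genuine pairs. That tradeoff is what a bare “match score” hides, and it is the reason to ask which threshold was used and who chose it.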

The “Minimum Responsible Standard”

Minimum Responsible Standard — AI Evidence in Courtrooms

Requirement 1: Provide original artifact + chain-of-custody + full transformation log.

Requirement 2: Disclose tool name, version, settings, and human operator steps.

Requirement 3: Provide condition-matched validation (error rates, thresholds, test context).

Disclosure: State what the system cannot determine (known blind spots).

Auditability: Allow independent reproduction or meaningful adversarial testing.
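For teams that want to operationalize this, the standard can be treated as a completeness check on a disclosure package. The sketch below is one hypothetical way to do that. The key names are invented labels for the five items above, not terms drawn from any rule or framework.

```python
# Hypothetical keys mapped to the items in the standard above.
REQUIRED_ITEMS = {
    "original_artifact": "Requirement 1: original artifact",
    "chain_of_custody": "Requirement 1: chain-of-custody",
    "transformation_log": "Requirement 1: full transformation log",
    "tool_name": "Requirement 2: tool name",
    "tool_version": "Requirement 2: tool version",
    "settings": "Requirement 2: settings",
    "operator_steps": "Requirement 2: human operator steps",
    "validation_report": "Requirement 3: condition-matched validation",
    "known_limitations": "Disclosure: what the system cannot determine",
    "reproduction_path": "Auditability: independent reproduction or adversarial testing",
}


def missing_items(package):
    """Return descriptions of required items that are absent or empty in the package."""
    return [label for key, label in REQUIRED_ITEMS.items() if not package.get(key)]


# Example package with invented values; two items are missing on purpose.
package = {
    "original_artifact": "exhibit_12_original.mp4",
    "chain_of_custody": "custody_form.pdf",
    "transformation_log": "steps.json",
    "tool_name": "example-upscaler",
    "tool_version": "0.9.0",
    "settings": {"scale": 2},
    "operator_steps": "see lab notes",
    "validation_report": "",
}

for gap in missing_items(package):
    print("Missing:", gap)
```

A check like this only confirms that something was provided for each item. Whether the validation is actually condition-matched, or the reproduction path is actually usable, still takes human review.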

FAQs

FAQ 1: Is AI-generated evidence automatically admissible in court?

No. Courts don’t admit “AI output” just because it looks professional. In U.S. federal practice, expert testimony must meet reliability requirements under Rule 702, and judges act as gatekeepers (often discussed through Daubert).

FAQ 2: What’s the single biggest weakness of AI-assisted evidence?

Provenance. If you can’t show what the original file was, how it was handled, and exactly what was done to it (tools, versions, parameters), then the output becomes hard to reproduce and hard to challenge responsibly. That’s a credibility problem, not just a technical one.

FAQ 3: Does “enhancing” a video or image with AI count as changing evidence?

It can. Enhancement may be reversible and carefully logged—or it may introduce irreversible changes. Even when the intent is cleanup, the question becomes whether the process is transparent, reproducible, and explainable. Authentication concepts also matter (for U.S. federal practice, see Rule 901).

FAQ 4: What disclosures should a responsible expert report include when AI tools are used?

At minimum: the original file, a process log, the tool name + version, key settings/parameters, and a way to reproduce the output (or clearly explain why reproduction isn’t possible). This aligns with the broader “govern, map, measure, and manage” direction in risk frameworks like NIST’s.

FAQ 5: Are AI transcriptions and translations “safer” than AI doing matching or attribution?

Usually, yes—if the originals are preserved and verification is built in. But “safer” doesn’t mean “risk-free.” The risk jumps when AI shifts from assistive tasks (speeding up work) to assertive tasks (making identity, intent, or credibility claims).

FAQ 6: What should a lawyer or judge ask first when AI is involved?

Ask for: (1) the original source file, (2) chain-of-custody documentation, (3) the exact tool/version used, and (4) whether an independent party can reproduce the result. If those answers are weak, the evidence may still be usable—but the confidence level should drop fast.

FAQ 7: Is there a “standard” for AI evidence in court yet?

Not one universal standard that covers every courtroom and every AI method. What exists today is a patchwork: evidentiary rules, admissibility standards (like Daubert discussions), and general AI risk governance guidance (like NIST’s AI RMF). Expect more formal guidance to emerge, but don’t assume it will be uniform.

Disclaimer: This article is general information for education and discussion. It is not legal advice. If you have a real case, talk to a qualified attorney in the relevant jurisdiction.

By Sami Hayes – AIchronicle Insights