Split-Screen Opening: Two Students, Same Assignment, Different Brains

Student A opens the assignment at 9:02 p.m. and types one sentence into a chatbot: “Write a persuasive essay on whether social media improves democracy.” The first draft appears before the student’s brain even builds a position. The rest of the session is cosmetic work—swap a few phrases, add a quote, soften the tone. By 9:14 p.m., it’s “done.” The teacher later circles a strong paragraph and writes: “Great structure.” No follow-up questions. No friction.

Student B opens the same assignment at 9:02 p.m., but starts by writing three messy bullet points in a notebook: two arguments for, one against. The intro is bad. The second paragraph contradicts the first. Ten minutes vanish just trying to say something coherent. Then, and only then, the student uses AI: “Give me counterarguments to this position and point out weak logic.” The tool helps. But the student still has to decide what’s true, what’s relevant, and what to keep.

Both students submit essays that look similar.

Only one of them had to retrieve anything from memory.

That’s the shift. Memorization isn’t “dying” because knowledge is worthless; it’s declining because the workflow is quietly removing retrieval from the loop—and retrieval is what makes knowledge usable under pressure. If you want a policy anchor for why systems should protect learning (not just outputs), UNESCO’s guidance is a solid baseline: https://unesdoc.unesco.org/ark:/48223/pf0000386693

The Three Myths Everyone Repeats About Memorization

Myth 1: “Memorization is rote, so losing it is progress.”

What’s true: Memorization isn’t one thing. There’s shallow repetition, sure. But there’s also retrieval practice, where pulling knowledge out strengthens it and makes it easier to use later. That “testing effect” isn’t a motivational slogan; it’s a robust finding in cognitive psychology. Start with Roediger & Karpicke’s classic paper: https://doi.org/10.1111/j.1467-9280.2006.01693.x

Why it matters: If AI reduces retrieval opportunities, students may still produce fluent work while their ability to think without scaffolding weakens.

Myth 2: “If you can look it up, you don’t need to remember it.”

What’s true: External memory helps, but it also changes what the brain stores. The “Google effect” (transactive memory with search) suggests people may remember where to find information more than the information itself. See Sparrow, Liu, & Wegner in Science: https://doi.org/10.1126/science.1207745

Why it matters: In real exams, interviews, lab benches, and emergencies, “I can look it up” isn’t always available—or fast enough. What survives is what you can retrieve.

Myth 3: “AI tutoring will replace practice.”

What’s true: Tools can support practice, but they don’t automatically create the desirable difficulty that learning needs. Students often confuse fluency (reading a good explanation) with competence (reproducing it). Retrieval practice tends to outperform “elaborative” studying for long-term learning in controlled comparisons. For a clear demonstration, see Karpicke & Blunt: https://doi.org/10.1126/science.1199327

Why it matters: If AI becomes the first draft, the student’s brain often shifts from building to polishing. That feels productive. It’s not the same thing as learning.

The Memory Budget: What AI Steals, What It Saves, What It Replaces

Here’s the simplest framework I’ve found useful when watching AI rollouts in real classrooms: students have a limited memory budget. Time, attention, and effort are finite. AI doesn’t just “help.” It reallocates that budget.

Think in three buckets:

- Stored knowledge (fast recall): facts, vocabulary, formulas, historical anchors.

- Retrieval strength: the ability to pull knowledge out when you’re stressed, timed, or distracted.

- Access knowledge: knowing how to search, verify, and stitch sources responsibly.

AI can improve the third bucket, access knowledge. It can even support the first, stored knowledge, by generating drills and examples. But the danger zone is the second. Retrieval strength grows when students repeatedly pull from memory, not when they repeatedly recognize a good answer on a screen.

This is where cognitive offloading becomes more than a buzzword. When people rely on external tools to hold information, they often reduce internal storage and retrieval effort. A useful overview is Risko & Gilbert’s review on cognitive offloading: https://doi.org/10.1016/j.tics.2016.07.002

In an AI-first workflow, students spend less time generating ideas from scratch and more time selecting, editing, and smoothing. Editing isn’t “bad.” But it draws from different mental muscles. You can edit your way to an A-minus while your retrieval strength quietly erodes.

Artifact Walkthrough: What a “Good” AI-Assisted Submission Hides

Take a polished history essay. It has a thesis, counterarguments, even citations. It reads like someone who knows the material.

Now look for learning evidence—especially the kind teachers used to see accidentally:

- Where are the false starts?

- Where is the rough outline that shows the student’s structure changing?

- Where are the imperfect intermediate claims that get repaired?

- Where is the student’s own recall—dates, names, causal chains—appearing before the tool fills them in?

Often, it’s missing because the workflow started with a draft. And when the first draft isn’t yours, the work stops being “thinking in public.” It becomes “quality control.”

This is why assessment validity is suddenly the real battlefield. The U.S. Department of Education’s Office of Educational Technology has been blunt about AI forcing changes in teaching and evaluation systems, not just classroom tools. Their report is worth reading end-to-end: https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf

The uncomfortable implication is that good-looking artifacts may become less diagnostic. The output improves. The teacher’s visibility declines.

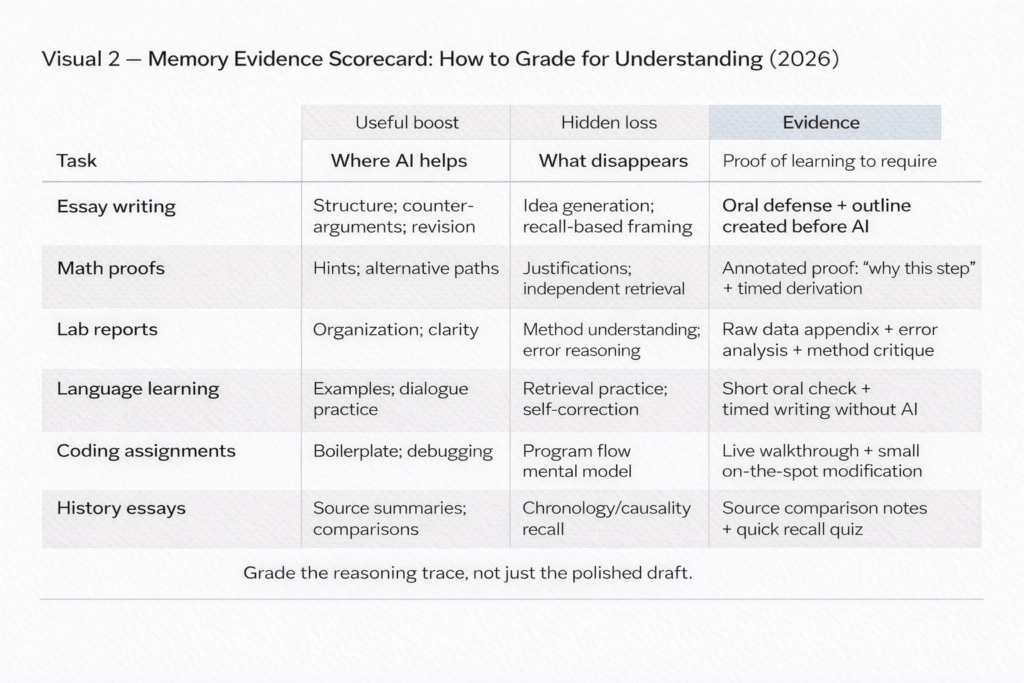

The One Table That Schools Actually Need

Memory Evidence Scorecard

| Task | Where AI genuinely helps | What disappears (memory/retrieval evidence) | What to require as proof of learning |
|---|---|---|---|
| Essay writing | Structure, counterarguments, revision suggestions | Idea generation, recall-based framing, personal synthesis | Oral defense (2–3 minutes) + outline created before AI use |
| Math proofs | Hints, alternative solution paths | Justification for steps, independent retrieval of lemmas | Annotated proof: “why this step” for each line + timed mini-derivation |
| Lab reports | Organization, language clarity | Understanding of methods, error reasoning, data interpretation | Raw data appendix + error analysis + “what surprised me” explanation |
| Language learning | Examples, dialogue practice | Retrieval practice, productive struggle, self-correction | Short oral check + timed writing without AI + spaced recall quizzes |
| Coding assignments | Boilerplate, debugging suggestions | Mental model of program flow, independent problem decomposition | Live walkthrough + small on-the-spot modification + test-case explanation |
| History essays | Source summaries, comparison scaffolds | Recall of chronology/causality, independent synthesis | Source comparison notes + “what changed my mind” reflection + quick recall quiz |

A Design Pattern, Not a Ban: “Attempt → Assist → Audit”

Banning AI is a fantasy in most environments. Full adoption without structure is worse. The middle path is a designed workflow that preserves retrieval while allowing tool benefits.

Attempt: Students start unaided for a fixed window (even 8–12 minutes). They must produce something from memory: an outline, a partial proof, a plan, a first paragraph.

Assist: AI is allowed to critique, expand, or provide counterexamples—not replace the first cognitive step.

Audit: Teachers verify with fast “proof of learning” checks that are hard to outsource.

This is not just classroom ideology; it aligns with emerging governance thinking. OECD’s review of how countries are approaching generative AI in education shows the policy gravity pulling toward structured use rather than simplistic permission/ban binaries: https://www.oecd.org/en/publications/oecd-digital-education-outlook-2023_c74f03de-en/full-report/emerging-governance-of-generative-ai-in-education_3cbd6269.html

Audit checks that take under 5 minutes

- Ask for a one-minute oral summary without notes

- Require a “concept map from memory” (then compare to sources)

- Have students explain one paragraph’s logic step-by-step

- Give a tiny timed recall quiz (3 questions) tied to the assignment

- Ask “what would change your conclusion?” and require two conditions

- Require an annotated outline that predates AI use

- Ask for one deliberate error the student initially made and corrected

The Real Equity Problem Isn’t Cheating. It’s Private Scaffolding.

The equity gap shows up long before discipline hearings.

Some students have quiet advantages: paid tutoring, parent editing, private study space, newer devices, faster internet, and enough privacy to iterate prompts without embarrassment. Others share devices, share rooms, and optimize for speed because time is scarce. AI doesn’t create inequality, but it can compound it by making “private scaffolding” cheaper and more scalable.

This is also where the “detector” era starts to collapse socially. Even when detection tools improve, the incentives don’t. Schools end up in suspicion loops that punish the wrong people or create chilling effects on legitimate assistance (especially for multilingual students). Many universities now publicly acknowledge limitations and risks of AI detection tools; Brandeis summarizes the reliability and bias concerns and points to supporting studies: https://www.brandeis.edu/ai-steering-council/ai-literacy/ai-teaching-learning/detection-tools.html

If you want the broader classroom dynamic, including how teachers get pushed into “validator” roles, this connects directly to AIChronicle’s piece “What Happens When Students Learn From AI First, Teachers Second.”

And for practical student workflows that can protect learning (when used with discipline), AIChronicle’s guide “How to Use ChatGPT for Studying, Homework, and Notes” pairs well with the “Attempt → Assist → Audit” model.

What I’d Watch for in the Near Future

I wouldn’t bet on a single “solution.” I’d watch for signals that systems are rebuilding retrieval on purpose:

- Oral defenses and short vivas returning as standard practice

- Timed retrieval checks woven into regular coursework (not just exams)

- Process portfolios that require intermediate artifacts (drafts, logs, reasoning traces)

- AI literacy standards that treat tools as powerful—but not authoritative

Here’s a testable prediction: by 2028, more schools will require at least one “secure proof-of-learning” assessment per course (oral, in-person, or proctored reasoning), not because they hate AI, but because they can’t grade learning reliably without it. Regulators and education agencies are already nudging institutions toward assessment redesign, and the policy direction is visible in national guidance like the U.S. Department of Education report: https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf

If that shift happens, memorization won’t “come back” as rote. It will come back as retrieval evidence, because education systems can’t run on polished output alone.

By Sami Hayes – AIchronicle Insights
