Cold Open: A Classroom Pattern You Can Feel

The new “AI allowed” policy is printed on a single sheet of paper and taped to the classroom door. It looks harmless. It’s also doing more work than the teacher.

Inside, a student turns in an essay that reads like a polished op-ed: clean structure, confident tone, tidy citations, and zero awkward sentences. Time spent: eight minutes. The teacher doesn’t need a detector. The writing is too smooth, too unearned, and—this is the weird part—too emotionally flat.

The teacher asks a simple follow-up question: “What’s your thesis in one sentence?” The student freezes. Not for long. They glance down at their laptop as if the answer is stored there.

This isn’t rare anymore. It’s becoming a standard classroom rhythm: output first, understanding later—if later ever arrives. Policymakers are trying to respond, but the guidance is still catching up to what teachers are living. UNESCO’s global guidance on generative AI in education is a good baseline, especially for risk framing and human-centered guardrails: https://www.unesco.org/en/articles/guidance-generative-ai-education-and-research

The Hidden Reordering: When the First Draft Isn’t Yours

When AI is the first step, learning quietly changes shape

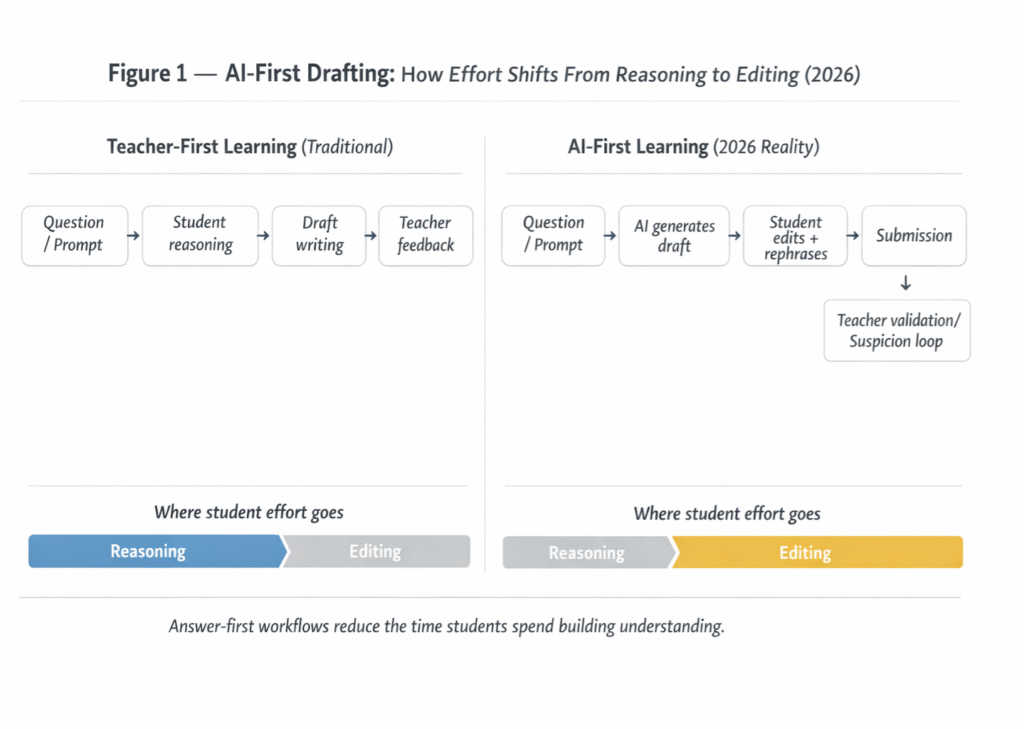

Learning changes shape not because students “cheat,” but because the order of cognition flips. The student’s mental model forms around the machine’s framing: its choice of categories, its confidence, its default explanation style. That matters. In learning science, sequencing is not decoration. It’s architecture.

In an “answer-first” workflow, students don’t begin with uncertainty and exploration. They begin with something that looks like certainty. And once the first draft arrives fully formed, the student’s job often becomes “editing,” not reasoning. The thinking that should have happened upstream gets replaced by post-hoc cleanup.

This is a close cousin of automation bias: when a system presents a recommendation, humans tend to overweight it, monitor less, and accept more—especially under time pressure and cognitive load. That pattern is documented well in human factors research and shows up strongly in decision-support contexts: https://pubmed.ncbi.nlm.nih.gov/21077562/

A broader open-access review of automation bias dynamics (including frequency and effects) is here: https://pmc.ncbi.nlm.nih.gov/articles/PMC3240751/

In classrooms, the same psychological gravity applies. If the tool reliably produces “good-enough” answers, students learn a new habit: don’t wrestle first. Ask first. Then patch the output until it passes.

I’ve watched students who are perfectly capable of doing the work stop doing the work—not because they became lazy overnight, but because the workflow rewarded speed and surface quality. The machine offered a shortcut, and the class system quietly validated it.

Three Failure Cases Schools Don’t Notice Until Grades Collapse

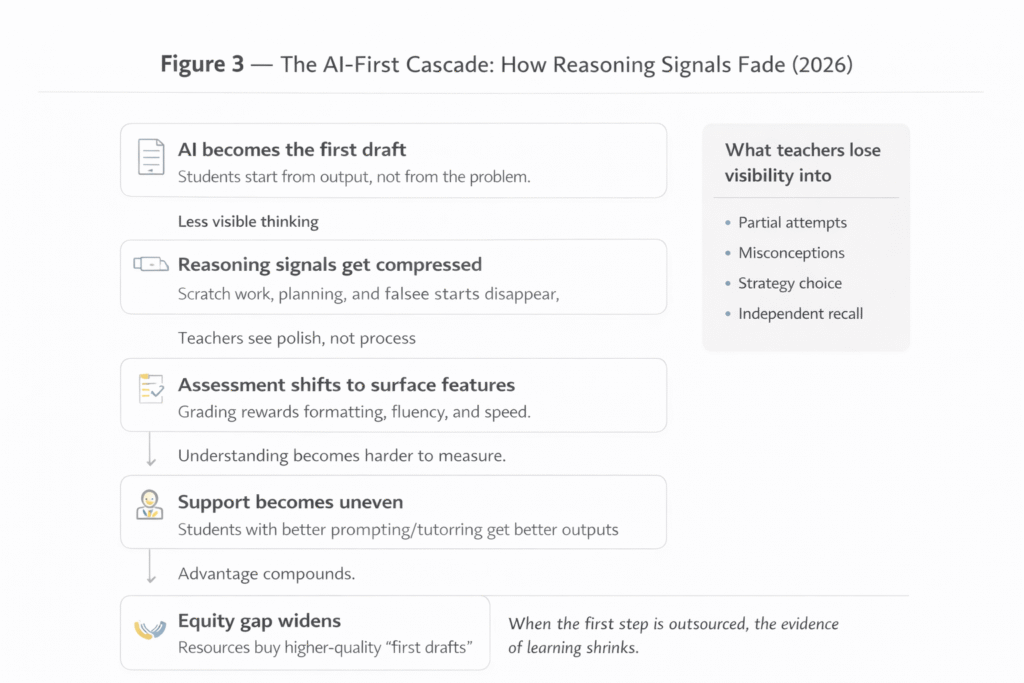

The danger isn’t one dramatic scandal. It’s slow damage, distributed across thousands of “fine” assignments.

Failure Case 1: Surface Mastery (Looks Smart, but Isn’t)

A student uses AI to draft a history essay. It earns an A. The structure is clean. The vocabulary is advanced. The citations look plausible. Then the exam arrives—short-answer, in-class, no devices—and the same student can’t explain basic causal links.

What the teacher sees: a strong writer whose test performance is oddly inconsistent.

What’s actually happening: the student learned to produce outputs that resemble understanding without building the underlying schema.

Failure Case 2: Feedback Loop (Students Inherit the Tool’s Blind Spots)

A student uses AI as a tutor for math proofs. The model is confident, but occasionally wrong in subtle ways: skipping justification steps, assuming a property without proving it, or “hand-waving” a transition that should be explicit.

The student doesn’t learn math. They learn the model’s style of being wrong while sounding right. And because the output is fluent, the student’s internal error-checking doesn’t engage.

What the teacher sees: a student who can “explain” but can’t prove.

What’s actually happening: the student’s standard for rigor gets quietly lowered to the model’s standard.

Failure Case 3: Equity Gap (Prompting Becomes a Private Tutor)

Students with time, money, and guidance learn to use AI strategically: outlining, iterating prompts, verifying citations, and cross-checking against rubrics. Students without that support often use AI as a one-shot answer machine. Same tool. Different outcomes.

This is exactly the kind of uneven impact OECD warns about when discussing governance and equity risks tied to generative AI in education: https://www.oecd.org/en/publications/oecd-digital-education-outlook-2023_c74f03de-en/full-report/emerging-governance-of-generative-ai-in-education_3cbd6269.html

What the teacher sees: “some students use AI responsibly, others don’t.”

What’s actually happening: advantage compounds, because AI literacy is becoming an invisible prerequisite.

The Assessment Rubric That Actually Works in 2026

If AI is in the room, the assessment has to change. Not by banning everything. By grading for what AI can’t fake easily: reasoning traces, constraints, and ownership of decisions.

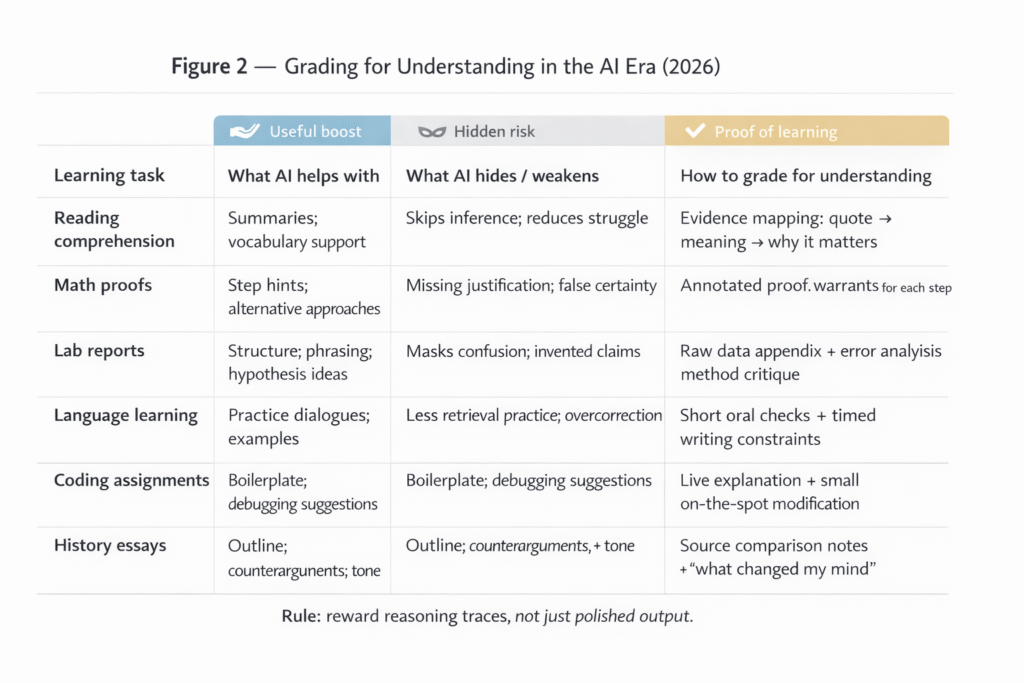

Rubric Table:

| Learning task type | What AI helps with | What AI hides or weakens | How to grade for understanding |
|---|---|---|---|
| Reading comprehension | Summaries, vocabulary support, discussion prompts | Skips struggle; replaces inference with paraphrase | Require “evidence mapping”: quote + interpretation + why it matters |
| Math proofs | Step suggestions, alternative solution paths | Missing justification; false confidence | Grade the “why”: require annotated proof with explicit warrants per step |
| Lab reports | Draft structure, phrasing, hypothesis ideas | Masks confusion; invents plausible-sounding claims | Require raw data appendix + error analysis + method critique |
| Language learning | Practice dialogues, feedback, examples | Overcorrects; reduces retrieval practice | Short oral checks + timed writing with restricted tools |
| Coding assignments | Boilerplate, debugging hints, refactors | Shallow understanding; copy-paste fluency | Require live explanation of tradeoffs + small code modifications on demand |
| History essays | Outline, counterarguments, tone | Citation laundering; superficial synthesis | Require source comparison notes + “what changed my mind” reflection |

This approach lines up with the U.S. Department of Education’s emphasis on aligning AI use with teaching and assessment goals (and being explicit about acceptable use): https://www.ed.gov/sites/ed/files/documents/ai-report/ai-report.pdf

Signals a student is learning ‘through’ AI, not ‘with’ AI

- They can polish language but can’t restate the core idea in plain words.

- Their drafts improve instantly, but their in-class reasoning doesn’t.

- They accept incorrect explanations if the tone sounds confident.

- They struggle to answer “why this step?” without checking a device.

- They submit work with citations they can’t describe or locate.

- Their work shows consistent structure across unrelated assignments (same “AI voice”).

- They perform far better on take-home tasks than on constrained, timed tasks.
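For teachers who keep a spreadsheet-style gradebook, the last signal in the list can be checked with a few lines of code. What follows is a minimal sketch, not a validated instrument: the score layout, the names `takehome_gap` and `needs_conversation`, and the 15-point threshold are all assumptions made for illustration. A flag here is a prompt for a conversation with the student, in the same spirit as the follow-up question in the cold open.

```python
from statistics import mean

# Assumed cutoff (in points on a 0-100 scale); not from any study, tune locally.
GAP_THRESHOLD = 15


def takehome_gap(take_home, timed):
    """Return the mean take-home score minus the mean timed, in-class score."""
    return mean(take_home) - mean(timed)


def needs_conversation(take_home, timed, threshold=GAP_THRESHOLD):
    """True when take-home work outpaces timed work by more than the threshold."""
    return takehome_gap(take_home, timed) > threshold


# Hypothetical gradebook entries, invented for this example.
scores = {
    "student_a": {"take_home": [92, 95, 90], "timed": [68, 72, 70]},
    "student_b": {"take_home": [84, 80, 86], "timed": [81, 79, 83]},
}

flagged = [
    name
    for name, s in scores.items()
    if needs_conversation(s["take_home"], s["timed"])
]
print(flagged)  # ['student_a']
```

The gap is computed per student rather than against a class average, so a student who is consistently strong or consistently struggling in both settings is not flagged.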

Contrarian Take: Banning AI Isn’t the Fix (And Neither Is “Full AI”)

A ban feels satisfying. It also creates a black market of invisible use and punishes honest students who disclose help. On the other hand, the “embrace it fully” crowd is often selling a fantasy: that access to AI automatically creates better learning.

Neither extreme matches what actually works.

The third option is structured friction: designing checkpoints that force cognition back into the process. Not vague “be ethical” posters. Real constraints. Example: require students to submit a short reasoning log (what they tried, what failed, what they changed) and do a two-minute oral defense of one paragraph. If they can’t defend it, it doesn’t count—no matter how pretty it looks.
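The rule in that example is a gate rather than a weighting, and the difference is easy to see when written out. The sketch below assumes a hypothetical `Submission` record and a `final_score` helper; treating a missing reasoning log the same way as a failed defense is an assumption added here, since the example above only states the oral-defense condition.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    essay_score: float       # rubric score for the written product, 0-100
    has_reasoning_log: bool  # student handed in the short reasoning log
    defended: bool           # student defended one paragraph in the oral check


def final_score(sub: Submission) -> float:
    """Gate the essay score on process evidence instead of averaging it in.

    Assumption: a missing log blocks credit just like a failed defense.
    """
    if not (sub.has_reasoning_log and sub.defended):
        return 0.0
    return sub.essay_score


polished_but_undefended = Submission(essay_score=96, has_reasoning_log=True, defended=False)
rough_but_owned = Submission(essay_score=78, has_reasoning_log=True, defended=True)

print(final_score(polished_but_undefended))  # 0.0
print(final_score(rough_but_owned))          # 78
```

Under a weighted scheme, a polished essay could still carry a failed defense to a passing mark. A gate makes the defense non-negotiable, which is the point of “it doesn’t count.”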

UNESCO’s guidance leans in this direction by emphasizing human-centered use, capacity building for teachers, and governance rather than panic bans: https://unesdoc.unesco.org/ark:/48223/pf0000386693

Real-World Context: Who Benefits When Teachers Become Validators

There’s a power shift happening inside education, and it’s not subtle.

Edtech vendors benefit when schools treat AI as a productivity tool first and a learning tool second. Districts benefit (short-term) when AI reduces grading load and paperwork. Universities benefit when policies look “modern” and defuse student pressure. Students benefit, at least on paper, when the system rewards grades and speed.

But teachers pay the cost when they become validators of machine-assisted output instead of mentors of thinking.

This is why “AI detectors” became such a tempting escape hatch—and why trust in them is wobbling. OpenAI discontinued its own AI text classifier citing low accuracy: https://openai.com/index/new-ai-classifier-for-indicating-ai-written-text/

Stanford HAI has also highlighted how detectors can be biased against non-native English writers, which turns enforcement into a fairness problem: https://hai.stanford.edu/news/ai-detectors-biased-against-non-native-english-writers

And many teaching centers have warned that detection tools are not reliable enough to use without substantial risk of false accusations—here’s one clear example from the University of Pittsburgh’s teaching guidance: https://teaching.pitt.edu/resources/encouraging-academic-integrity/

So teachers are stuck. If they rely on detectors, they risk injustice. If they ignore AI use, they risk hollow learning. That’s why assessment design is becoming the real battlefield.

If you want a deeper connection to what this does to basic cognitive skills, pair this article with The decline of memorization in an AI-assisted world (https://theaichronicle.org/the-decline-of-memorization-in-an-ai-assisted-world/). And if you’re trying to steer students toward responsible workflows instead of shortcuts, this companion guide helps: How to use ChatGPT for studying, homework, and notes (https://theaichronicle.org/how-to-use-chatgpt-for-studying-homework-and-notes/).

The Analyst’s Verdict: 2027–2028

By 2027, the most credible schools won’t be the ones with the strictest bans. They’ll be the ones with the clearest proof-of-learning standards.

Here’s a testable prediction: by 2028, more secondary schools and universities will shift a meaningful portion of writing-heavy courses toward hybrid assessment—short oral defenses, process logs, and constrained in-class tasks—because AI-generated fluency will keep outpacing traditional take-home grading. You’ll see it framed less as “anti-AI” and more as “assessment validity.”

OECD’s broader direction—promoting forward-looking guidance, monitoring impact, and building educator capacity—supports that trajectory toward governance and redesign rather than denial: https://www.oecd.org/en/topics/sub-issues/artificial-intelligence-and-education-and-skills.html

The schools that win won’t “fight AI.” They’ll force learning to leave fingerprints—reasoning traces that a model can’t conjure on demand.

By Sami Hayes – AIchronicle Insights
