The New Default: Assume It’s Optimized, Not True

Online content used to be “published.”

Now it’s produced.

Not just by bots. By incentive machines.

A post can be wrong and still feel perfect: clean tone, confident cadence, neat formatting, plausible detail, and just enough “sources” to stop the average reader from checking.

That’s the shift. Believability is being optimized like a product metric.

This is why provenance standards are suddenly showing up in serious conversations about trust: they’re an attempt to restore “where did this come from?” to a media environment that keeps stripping that question away.

C2PA (Coalition for Content Provenance and Authenticity) specification

A Practical Vocabulary for Suspicious Reading

Think of this as a working vocabulary. Not academic. Not cute. Just useful.

Provenance

Where a piece of media came from and what happened to it on the way to you. In practice, provenance means cryptographic and workflow “receipts,” not vibes.

C2PA provenance standard (technical specification)

Chain of custody

A record of handling: who collected it, who stored it, who transformed it, when, and under what controls. Courts and incident responders care because gaps create doubt and invite tampering claims.

NIST SP 800-86 (forensics techniques + evidence handling)
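One way to picture a handling record that resists quiet edits is a hash-chained log, where each entry commits to the one before it. The sketch below is only an illustration under stated assumptions: the `CustodyEntry` fields and the helper names are hypothetical, and it does not reproduce any procedure from NIST SP 800-86 or the C2PA specification.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class CustodyEntry:
    actor: str      # who handled the item (hypothetical field)
    action: str     # e.g. "collected", "stored", "transcoded"
    timestamp: str  # ISO 8601 string supplied by the caller
    prev_hash: str  # digest of the previous entry, "" for the first

    def digest(self) -> str:
        # Serialize the fields in a fixed order, then hash them.
        payload = json.dumps(
            [self.actor, self.action, self.timestamp, self.prev_hash]
        ).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_entry(log: List[CustodyEntry], actor: str, action: str,
                 timestamp: str) -> List[CustodyEntry]:
    """Return a new log with one more entry linked to the current last entry."""
    prev = log[-1].digest() if log else ""
    return log + [CustodyEntry(actor, action, timestamp, prev)]


def first_broken_link(log: List[CustodyEntry]) -> Optional[int]:
    """Return the index of the first entry whose prev_hash does not match
    the digest of the entry before it, or None if every link holds."""
    expected = ""
    for i, entry in enumerate(log):
        if entry.prev_hash != expected:
            return i
        expected = entry.digest()
    return None
```

Editing an earlier entry changes its digest, so the next entry's `prev_hash` no longer matches and the gap becomes visible. A chain like this only helps if the latest digest is also recorded somewhere the editor cannot reach; otherwise the whole log can simply be rebuilt.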

Context collapse

When something true in one context becomes misleading in another. A clipped video can be “real” and still function as a lie once the surrounding conditions are removed.

“Context Collapse” research overview (Oxford Academic / JCMC)

Model hallucination

When a model outputs something that looks like knowledge but isn’t grounded in the input or reality. The danger isn’t that it’s random—it’s that it’s coherent.

NIST AI RMF (reliability + risk framing for AI outputs)

Synthetic consensus

A false sense that “everyone agrees” created by repeated AI-generated summaries, recycled phrasing, and copied narratives across platforms. It’s not mass agreement. It’s mass replication.

Attribution gap

When you can’t reliably answer “who is responsible for this claim?”—because it’s reposted, anonymized, AI-assisted, or laundered through layers of accounts and aggregation.

EU Digital Services Act overview (systemic risk + platform duties)

Confidence theater

Presentation tricks that manufacture certainty: overly precise numbers, “expert tone,” fake citations, glossy charts, confident voiceover, authoritative layout. The output feels audited even when it’s not.

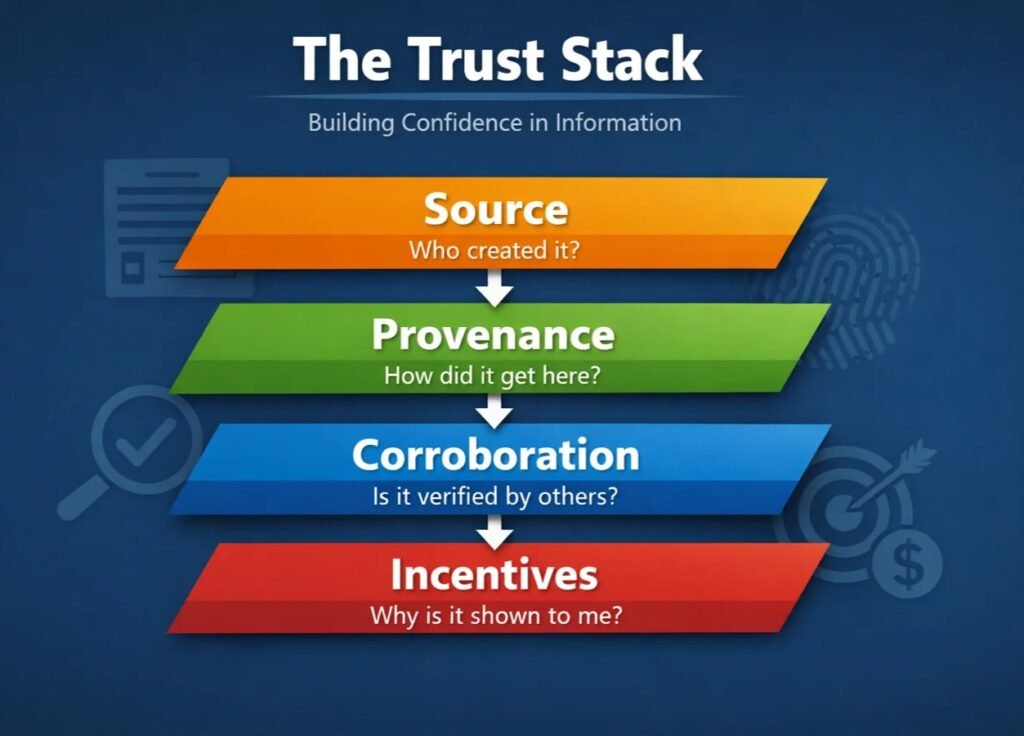

The Three-Layer Test: Source, Story, System

You don’t need a full verification lab to read better.

You need a consistent mental model.

Three layers. Run them in order.

Layer 1: Source (Who is talking?)

Start with identity and accountability, not the claim.

Does the source have a stable presence? A real organization page? A domain you can verify? A documented track record? Are they making a claim that would normally require editorial standards, peer review, or institutional backing?

And if it’s an “official” account: official according to whom? The platform badge? The bio text? A link you can verify off-platform?

If you’re dealing with endorsements, paid influence, or “trust me, I’m affiliated,” regulators care about disclosure—and readers should too.

FTC Endorsement Guides (official guidance hub)

Layer 2: Story (What is being claimed?)

Now read the claim like an auditor reads an invoice.

Is the claim falsifiable? Does it cite primary documents? Does it give dates, locations, methods, or only vibes? Does it have numbers with no boundaries (“X% increase” — increase in what, measured how, over what period)?

The classic tell is fake specificity: precise figures, exact quotes, and confident conclusions with no path for you to reproduce the reasoning.

Another tell: claims that “close the loop” too neatly. Real events are messy. Real investigations contain uncertainty. Real science is full of caveats.

Layer 3: System (Why is it shown to you?)

Even accurate content can be delivered to you for bad reasons.

Recommender systems reward watch time, rage, and certainty. Search rankings reward pages that look authoritative. AI answer boxes reward compact narratives—even when reality is complex.

So the question becomes: what incentive shaped this? Engagement? Conversions? Politics? A competitor’s takedown? A content farm trying to rank? A platform trying to keep you from clicking out?

Regulators are increasingly framing this as a systems issue, not just “bad posts.”

EU Digital Services Act (risk obligations + transparency framing)

Four Failure Modes You’ll See Weekly

1. “Screenshots as authority”

A screenshot circulates: a “policy,” a “breaking news alert,” a “chat log,” a “dashboard.” It looks like evidence. It travels like evidence. But it’s often the least verifiable form of evidence.

Example (realistic): A screenshot of a “government notice” spreads on social media, but there’s no document number, no hosting domain, and no archive trail.

Hidden mechanism: Screenshots remove URLs, metadata, and navigable context. They’re designed for frictionless sharing, not verification.

The tell: No primary source link, no independent reproduction, and the screenshot is the only artifact.

NIST guidance on handling digital evidence + forensics context

2. “Citations that don’t say what they claim”

This is citation laundering: real-looking references attached to claims they don’t support. Sometimes the cited page exists but says something weaker. Sometimes it’s a general report used to “bless” a specific claim. Sometimes it’s irrelevant, but most readers won’t click.

Example (realistic): A post cites a reputable institution, but the linked report is about a different country, a different year, or a different metric.

Hidden mechanism: The citation is used as a trust token, not as evidence.

The tell: Quoted lines aren’t findable, the numbers don’t match, and the claim can’t be located inside the cited document.

OECD guidance and work on information integrity / mis- and disinformation policy context
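The “findable quote” and “matching numbers” tells can be roughed out in a few lines once you have the cited document as plain text. This is a minimal sketch, not a fact-checking tool: the function names are hypothetical, and plain substring matching will miss paraphrases, translations, and numbers written out as words.

```python
import re
import unicodedata
from typing import List

# Map curly quotes to straight ones so copy-pasted quotes still match.
_QUOTE_MAP = str.maketrans({
    "\u201c": '"', "\u201d": '"',
    "\u2018": "'", "\u2019": "'",
})


def normalize(text: str) -> str:
    """Fold typographic variation: quote style, whitespace, and case."""
    text = unicodedata.normalize("NFKC", text)
    text = text.translate(_QUOTE_MAP)
    text = re.sub(r"\s+", " ", text)  # line breaks and runs of spaces
    return text.strip().casefold()


def quote_is_findable(quote: str, document_text: str) -> bool:
    """True if the quoted passage appears in the cited document."""
    return normalize(quote) in normalize(document_text)


def missing_numbers(claim: str, document_text: str) -> List[str]:
    """List the numbers in the claim that never appear in the document."""
    pattern = r"\d+(?:[.,]\d+)*"
    in_document = set(re.findall(pattern, document_text))
    return [n for n in re.findall(pattern, claim) if n not in in_document]
```

A miss from either function is a reason to open the cited document and read it, not proof that the citation is bogus.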

3. “AI voice/video that passes casual checks”

People still think “deepfake” means obvious glitches. That’s dated. The more common risk now is content that’s good enough to pass casual viewing and short enough that nobody scrutinizes it.

Example (realistic): A short clip shows a public figure “saying” something inflammatory; it’s framed as breaking news; it spreads faster than any correction can.

Hidden mechanism: Low-friction formats + high-arousal content + algorithmic amplification. The system doesn’t need perfection—just speed.

The tell: No full-length source video, no original upload trail, no provenance info, and no corroboration by primary outlets.

C2PA (how provenance can be attached to media)

4. “Official-looking accounts with rented credibility”

Verification badges have drifted from “identity checks” toward a mix of identity, subscription status, and notability signals, depending on the platform. Meanwhile, account takeovers, lookalike handles, and brand impersonation have kept scaling.

Example (realistic): A “verified” account posts investment advice, medical claims, or “leaked documents,” and people share it because the badge short-circuits skepticism.

Hidden mechanism: Users treat platform UI as a trust guarantee; platforms treat badges as a product feature or policy artifact.

The tell: The account has weak off-platform presence, inconsistent history, and no stable domain-based confirmation.

FTC consumer guidance on spotting and reporting scams (identity + impersonation patterns)

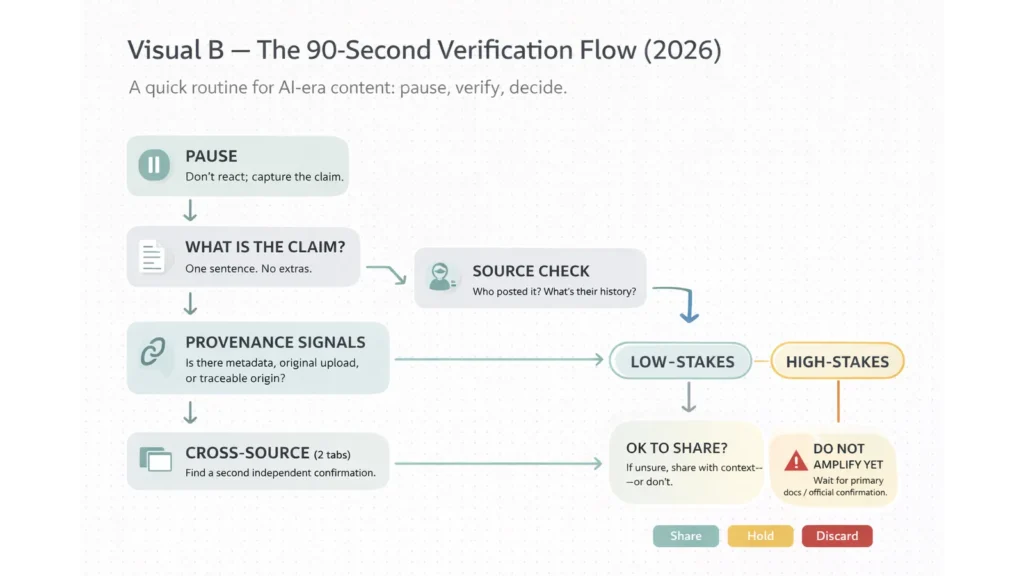

A Reader’s Field Protocol (Do This In 90 Seconds)

If you only do one thing, do this: slow down long enough to run the test.

“Fast” is how synthetic media wins.

“Receipts” is how it loses.

90-second verification moves

Check whether the claim links to a primary source (original document, dataset, full video), not just commentary.

Reverse-search key phrases or distinctive frames to see if the content existed earlier in a different context.

Look for provenance signals (content credentials / attached receipts) when available—treat absence as “unknown,” not “fake.”

If it’s a screenshot, demand the underlying URL, document number, or archive link before sharing.

Cross-check with at least one independent institution/outlet that would be accountable for corrections.

Watch for “fake specificity”: exact numbers and quotes without a method trail.

When stakes are high, favor sources with transparent standards and correction policies over viral accounts.

C2PA (provenance basics + spec entry)
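The moves above produce three kinds of answer, not two: passed, failed, and could not check. A rough sketch of recording them that way follows. The check labels and function names are hypothetical shorthand for the seven moves, and no real content-credentials library is called here.

```python
from typing import Dict, List, Optional

# Hypothetical labels for the seven verification moves.
CHECKS = (
    "primary_source",
    "earlier_context_search",
    "provenance",
    "screenshot_backed",
    "independent_outlet",
    "method_trail",
    "accountable_source",
)


def provenance_result(credentials_found: bool,
                      credentials_valid: bool = False) -> Optional[bool]:
    """Missing credentials mean 'unknown' (None), not 'fake' (False)."""
    if not credentials_found:
        return None
    return credentials_valid


def summarize(results: Dict[str, Optional[bool]]) -> Dict[str, List[str]]:
    """Group checks by outcome; checks that were never run stay unknown."""
    summary: Dict[str, List[str]] = {"passed": [], "failed": [], "unknown": []}
    for name in CHECKS:
        outcome = results.get(name)
        if outcome is True:
            summary["passed"].append(name)
        elif outcome is False:
            summary["failed"].append(name)
        else:
            summary["unknown"].append(name)
    return summary
```

Keeping “unknown” as its own bucket is the point of the design: a clip with no attached credentials lands there, next to the checks you did not have time to run, and not in the “failed” pile.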

And yes: this is exactly why “Why verified no longer means trusted online” matters here. Badges reduce friction. Verification is not the same thing as trust.

What Changes When Everyone Reads This Way

Newsrooms shift first. Not because they love extra work, but because the cost of being wrong has gone up while the speed of false narratives has increased.

You’ll see more “show the receipt” journalism: publishing primary documents, embedding source links, sharing full clips, and explaining what’s unknown. You’ll also see fewer stories built on anonymous virality unless there’s hard corroboration.

Education shifts next. I’ve watched a school move from “turn in the essay” to “turn in the process,” because polished output stopped correlating with understanding. The practical response is boring and effective: oral checks, process notes, annotated drafts, and timed reasoning tasks.

Platforms are forced into the conversation. Not because they want to be referees, but because provenance UI and citation panels are becoming part of legitimacy. The public is asking for signals that are harder to fake than a badge.

EU Digital Services Act (platform transparency + systemic risk direction)

The Hard Truth: Suspicion Has Costs

Suspicion is not free.

It costs time. It costs attention. It costs social energy. And the people who can afford those costs are not distributed evenly.

A high-skill reader with time can run checks, pull primary sources, and compare coverage. A tired parent on a commute can’t. A small-business owner juggling payroll can’t. Suspicion becomes a privilege.

There’s also a psychological cost: if readers are trained to doubt everything, some slide into “nothing is real,” which is not skepticism—it’s surrender. Bad actors love that outcome because it collapses the idea of proof.

So the goal isn’t paranoia. It’s calibrated suspicion: treat uncertainty honestly, demand receipts for high-stakes claims, and accept that some things remain unresolved without declaring everything fake.

This is where “Who audits the algorithms” becomes the bigger policy question. When distribution systems decide what gets seen, “media literacy” alone can’t carry the burden.

A Minimum Standard for Trustworthy Content

Minimum Trust Standard — Public Claims

1. Claim: …

2. Primary source link: …

3. Date + location context: …

4. Method: …

5. Uncertainty: …

6. Incentive/affiliation: …

7. Reproducibility/check: …

8. Provenance note (if synthetic media): …
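For anyone who wants to fill in the template programmatically, the eight fields map naturally onto a small record type. This is a sketch under assumptions: the class name, the field names, and the `is_synthetic_media` flag are hypothetical, and the only rule encoded is the template's own condition that the provenance note applies to synthetic media.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class PublicClaim:
    claim: str = ""
    primary_source_link: str = ""
    date_location_context: str = ""
    method: str = ""
    uncertainty: str = ""
    incentive_affiliation: str = ""
    reproducibility_check: str = ""
    provenance_note: Optional[str] = None  # only required for synthetic media
    is_synthetic_media: bool = False       # hypothetical flag, not a template field

    def missing_fields(self) -> List[str]:
        """Return the template fields that are still empty, in template order."""
        missing = []
        for f in fields(self):
            if f.name == "is_synthetic_media":
                continue
            if f.name == "provenance_note" and not self.is_synthetic_media:
                continue
            value = getattr(self, f.name)
            if not value or not value.strip():
                missing.append(f.name)
        return missing
```

An empty result from `missing_fields()` says only that the form is complete. Whether each entry holds up is still the reader's job.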

By Sami Hayes – AIchronicle Insights