The Line Everyone Skipped

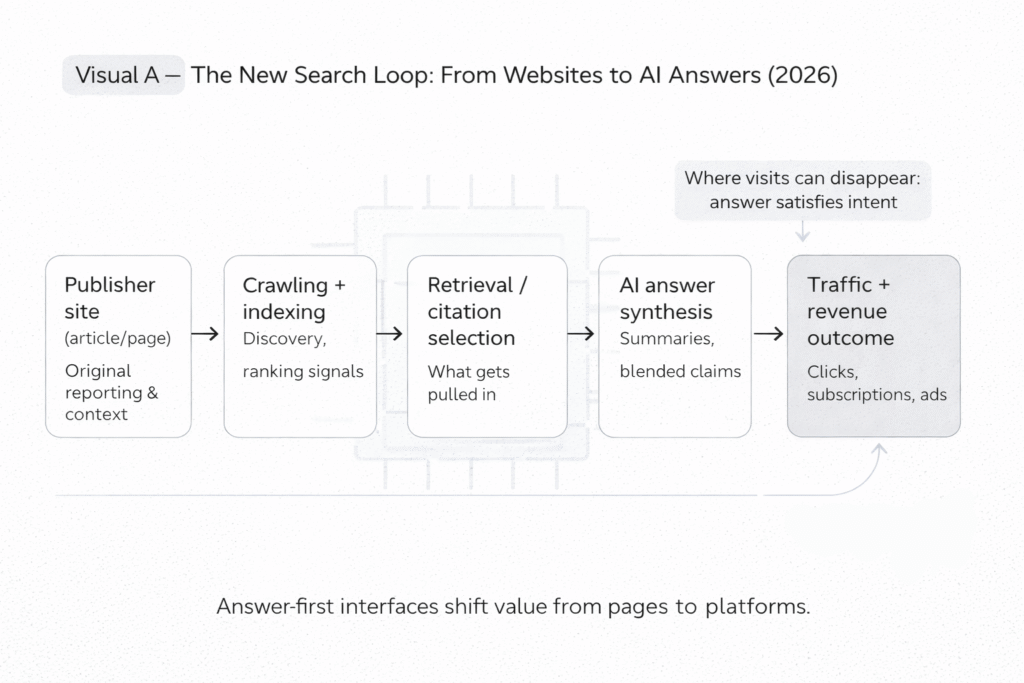

A quiet product change is turning into a loud economic shift: search engines are increasingly answering questions on the results page, instead of sending people out to websites.

You can see the direction in how Google frames AI Overviews and AI Mode: the search page becomes the destination, and links become supporting evidence rather than the main path.

That’s not automatically “bad.” For users, it can be faster. For publishers, it can be brutal. For everyone, it changes what “being found” even means.

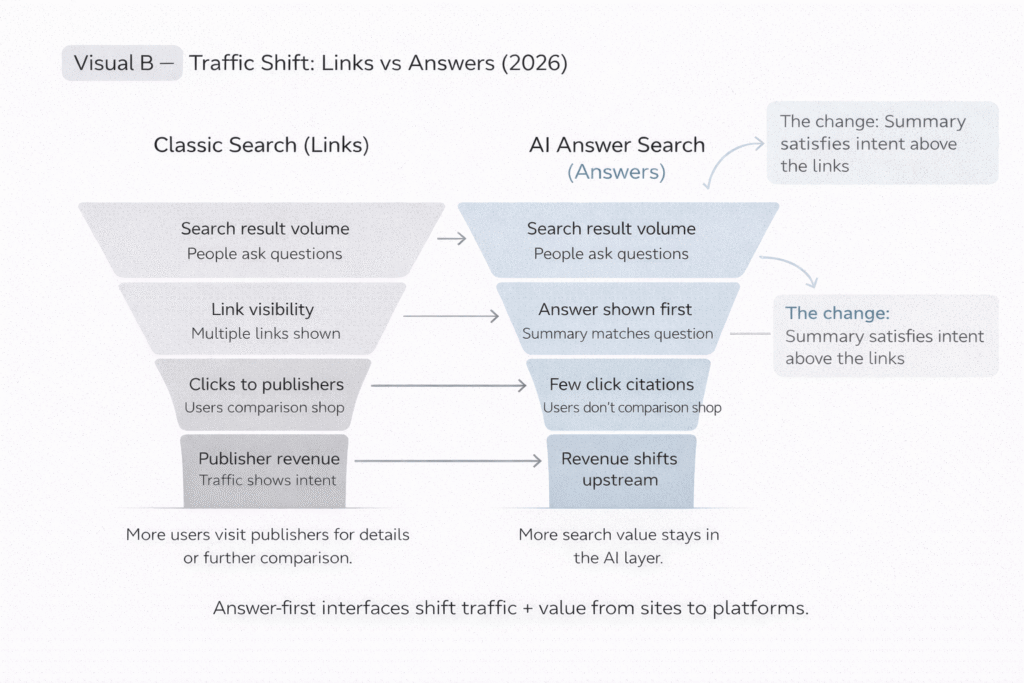

The hard part is that the change is uneven. Some queries still behave like classic search. Others become answer-first, with fewer clicks, fewer pageviews, and fewer chances for a website to earn trust directly.

And if your site is funded by ads, subscriptions, or affiliate revenue, that click is the lifeblood.

What People Get Wrong About This Topic

Wrong assumption: “If the AI mentions my site, I’ll still get the click.”

Reality check: Pew Research Center (Data Labs) found that when an AI summary appears, users are less likely to click links in the search results. If fewer people click, “visibility” can turn into a hollow metric.

Wrong assumption: “AI answers are basically featured snippets, just longer.”

Reality check: AI answers are composed outputs. That changes the user’s behavior and expectations: they often stop at the summary, and only click when they want to verify, compare, or go deeper. Google’s own descriptions of AI Overviews emphasize faster help inside search, not referrals out.

Wrong assumption: “Citations solve the trust problem.”

Reality check: citations help, but they don’t guarantee that the summary is faithful, complete, or context-correct. The most important question becomes: can you reproduce what the system did, and can you audit it? That’s the same transparency problem NIST’s AI Risk Management Framework guidance flags more broadly: documentation, transparency, and governance matter when outputs shape decisions.

Wrong assumption: “This is just an SEO problem.”

Reality check: it’s also a market-structure problem. If answers shift “upstream” into the platform, the platform controls the interface, the attribution, and the monetization choke points. That concentration of control is part of why the EU’s Digital Services Act singles out very large online platforms and very large online search engines for additional transparency reporting obligations.

The Mechanism Map (How It Actually Works)

Moving Part 1: Answer Composition

What it is

Answer-first search uses AI to generate a direct response on the results page, sometimes with citations.

What fails in real environments

The summary can compress nuance, flatten uncertainty, or merge multiple sources into a single “confident” voice. Even when links exist, many users don’t open them, so mistakes can travel farther than they used to.

What signals it’s failing

You see mismatched context, overgeneralization, and claims that are hard to verify from the cited sources. When that happens, users may stop trusting both the platform and the publishers.

Reference: Pew Research Center (Data Labs) on reduced clicking with AI summaries

Moving Part 2: Attribution and Link Economics

What it is

Attribution is how the system signals “where this came from,” usually through citations or link cards.

What fails in real environments

Attribution can become decorative: present, but not persuasive enough to earn the click. If the summary satisfies the intent, the link becomes optional—and “optional” is often “ignored.”

What signals it’s failing

Publishers see impressions stay stable or rise while clicks drop. Brand search may not increase even when content is “used” in summaries.

Reference: Google’s product framing for AI summaries in Search
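The failure signal above (impressions holding steady while clicks fall) can be checked mechanically. Here is a minimal sketch, assuming you can export per-query impressions and clicks for two comparable periods from whatever search analytics tool you use. The `QueryStats` type, the function name, the sample queries, and both thresholds are hypothetical choices for illustration, not an industry standard.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    """Impressions and clicks for one query over one reporting period."""
    impressions: int
    clicks: int


def citation_without_traffic(
    before: dict[str, QueryStats],
    after: dict[str, QueryStats],
    min_click_drop: float = 0.25,       # assumed: flag a 25%+ fall in clicks
    max_impression_drop: float = 0.05,  # assumed: impressions "held" if within -5%
) -> list[str]:
    """Return queries whose impressions held up while clicks fell."""
    flagged = []
    for query, old in before.items():
        new = after.get(query)
        if new is None or old.impressions == 0 or old.clicks == 0:
            continue  # not enough data to compute a relative change
        impression_change = (new.impressions - old.impressions) / old.impressions
        click_change = (new.clicks - old.clicks) / old.clicks
        if impression_change >= -max_impression_drop and click_change <= -min_click_drop:
            flagged.append(query)
    return sorted(flagged)


# Made-up sample data, purely to show the shape of the input.
before = {
    "how to descale a kettle": QueryStats(impressions=1000, clicks=120),
    "kettle reviews": QueryStats(impressions=800, clicks=90),
}
after = {
    "how to descale a kettle": QueryStats(impressions=1100, clicks=60),
    "kettle reviews": QueryStats(impressions=790, clicks=88),
}
print(citation_without_traffic(before, after))
```

A flagged query is a prompt to look at the live results page, not proof that an AI summary caused the drop. Seasonality, ranking changes, and layout changes can produce the same pattern.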

Moving Part 3: Feedback Loops and Market Pressure

What it is

Platforms optimize for user satisfaction and session efficiency; publishers optimize for traffic and conversion.

What fails in real environments

If publishers lose traffic, they invest less in original reporting and niche expertise. That can degrade the very “web knowledge base” AI summaries draw from—making answers worse over time, or more reliant on a shrinking set of big sources.

What signals it’s failing

More “thin” content farms chasing what the AI tends to cite; fewer deep explainers and local reporting; heavier paywalls; more aggressive monetization tactics.

Reference: NIST AI RMF Playbook on governance and risk controls (broad but relevant to system-level harms)

The Incentive Layer (Follow the Human Motives)

Users want speed. They’re not wrong.

Platforms want retention. They’re not shy about it either.

Publishers want credit and revenue for the work. Also not wrong.

The conflict shows up in what organizations do when money is on the line: dashboards, KPIs, procurement decisions, and legal exposure. When a platform can keep the user on-platform and sell the ad next to the answer, the platform’s incentive is clear. The publisher’s incentive becomes: “How do I still get discovered if the click is no longer the default outcome?”

This is where trust gets weird. A publisher can do everything “right,” yet still be functionally invisible if the summary absorbs the demand.

For the broader trust angle (beyond search), see “Why verified no longer means trusted online.”

External reference: EU DSA transparency reporting for large platforms and search engines (platform accountability pressures are rising)

“If This Is True, Then We Should See…” (Testable Signals)

- More “answer-first” interfaces branded as modes, not features (expect more rollout language like AI Mode rather than “snippet enhancements”).

- More public measurement work showing click changes when summaries appear (watch for follow-ups like Pew Data Labs style instrumentation, not platform PR dashboards).

- More pressure for transparency reporting and audits as search becomes a major information intermediary, especially in the EU.

- More publisher moves toward “AI-proof value”: tools, communities, data, and experiences that can’t be summarized cleanly into a paragraph.

- More emphasis on provenance and evaluation standards (even outside search), because the same trust questions apply everywhere AI summarizes and asserts.

Reference: NIST AI RMF Playbook, https://www.nist.gov/itl/ai-risk-management-framework/nist-ai-rmf-playbook

The Comparison That Actually Matters

This isn’t “old SEO vs new SEO.” It’s “click economy vs no-click economy.”

| Dimension | Traditional Search | AI Answer-First Search |
|---|---|---|
| User goal | Find a page to read | Get an answer immediately |
| Primary metric | Click-through rate | Satisfaction without leaving |
| Publisher value | Content is the destination | Content is training/context fuel + citation |
| Trust signal | Website brand + page quality | Summary quality + provenance/citations |
| Failure mode | Spammy rankings | Confident wrong summaries + silent non-clicks |

For the accountability angle, see “Who audits the algorithms.”

The Decision Checklist

A practical checklist for evaluating AI-answer search claims:

- Ask whether the system shows citations that a normal reader can verify, not just vague “sources.”

- Check whether citations point to primary documents (laws, standards, original research) versus summaries of summaries.

- Look for reproducibility signals: can the system explain why it answered that way, and what it used?

- Compare answers across multiple systems (platform diversity is a basic sanity check when outputs can differ).

- Watch for category risk: medical, legal, elections, finance, and safety questions should trigger higher scrutiny, even when summaries look polished.

- Demand governance language: model updates, evaluation, and documentation (this is where serious operators differ from hype).

- If you publish content: track impressions vs clicks separately so you can see “citation without traffic” clearly.
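The second checklist item (primary documents versus summaries of summaries) can be roughed out in code as a first-pass filter. This is a sketch under loud assumptions: the `PRIMARY_SUFFIXES` allowlist and both function names are hypothetical, and a domain suffix is only a weak proxy for “primary source.” It uses only Python’s standard `urllib.parse`.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: host suffixes this sketch treats as "likely primary".
# A real review would need a curated list per topic (laws, standards, research).
PRIMARY_SUFFIXES = (".gov", ".europa.eu", ".int")


def is_likely_primary(url: str) -> bool:
    """Guess whether a cited URL points at a primary source, by host suffix only."""
    # URLs without a scheme yield no hostname and are treated as not primary.
    host = (urlparse(url).hostname or "").lower()
    return any(host == s.lstrip(".") or host.endswith(s) for s in PRIMARY_SUFFIXES)


def primary_share(citations: list[str]) -> float:
    """Fraction of citations that look primary; 0.0 when there are none."""
    if not citations:
        return 0.0
    return sum(is_likely_primary(u) for u in citations) / len(citations)


citations = [
    "https://www.nist.gov/itl/ai-risk-management-framework/nist-ai-rmf-playbook",
    "https://example.com/blog/what-nist-says",
]
print(primary_share(citations))
```

A low share does not mean an answer is wrong. It only tells you the summary leans on secondhand material, which is a reason to open the links and check.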

External reference (governance baseline): NIST AI RMF Playbook

Internal reference to pair with this section: Who audits the algorithms

The “Minimum Responsible Standard”

Minimum Responsible Standard — AI Answers in Search

Requirement 1: Show citations to verifiable sources.

Requirement 2: Make uncertainty visible when the evidence is mixed.

Requirement 3: Provide a way to inspect “why this answer” at a high level.

Disclosure: Label AI summaries clearly and note when content is generated.

Auditability: Maintain evaluation and change logs for major ranking/answer shifts.