The “Cloud” is a Construction Project With a Utility Bill

The public hearing isn’t about AI. Not officially.

It’s about a “large load customer,” a new substation, and a rezoning request on the edge of town. Someone on the commission asks why the building needs to be that big. A resident asks about noise. Another asks the question that always lands hardest: “How much water will this use?”

Then the utility rep clears their throat and says the quiet part out loud: even if the developer has money, the grid still has physics. Interconnection studies take time. Transformers take time. Transmission upgrades take time.

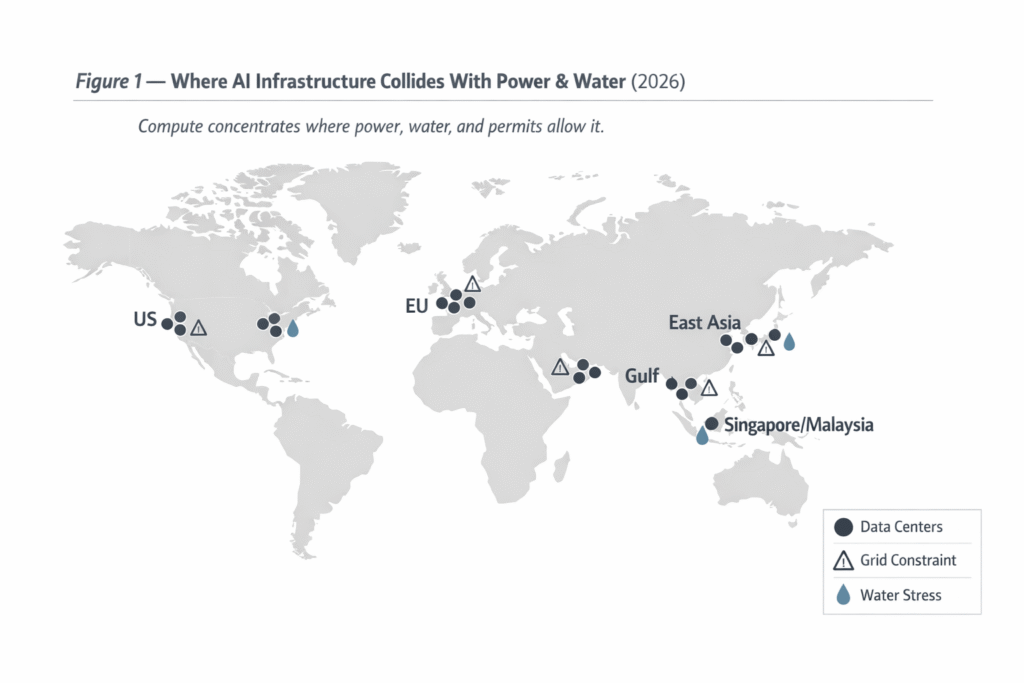

The cloud doesn’t arrive on a fiber line. It arrives on trucks. It arrives with concrete, copper, steel, pumps, and permits.

Follow the Money: Why AI Infrastructure Is Capital-Intensive by Design

When people argue about “AI scaling,” they usually argue about GPUs.

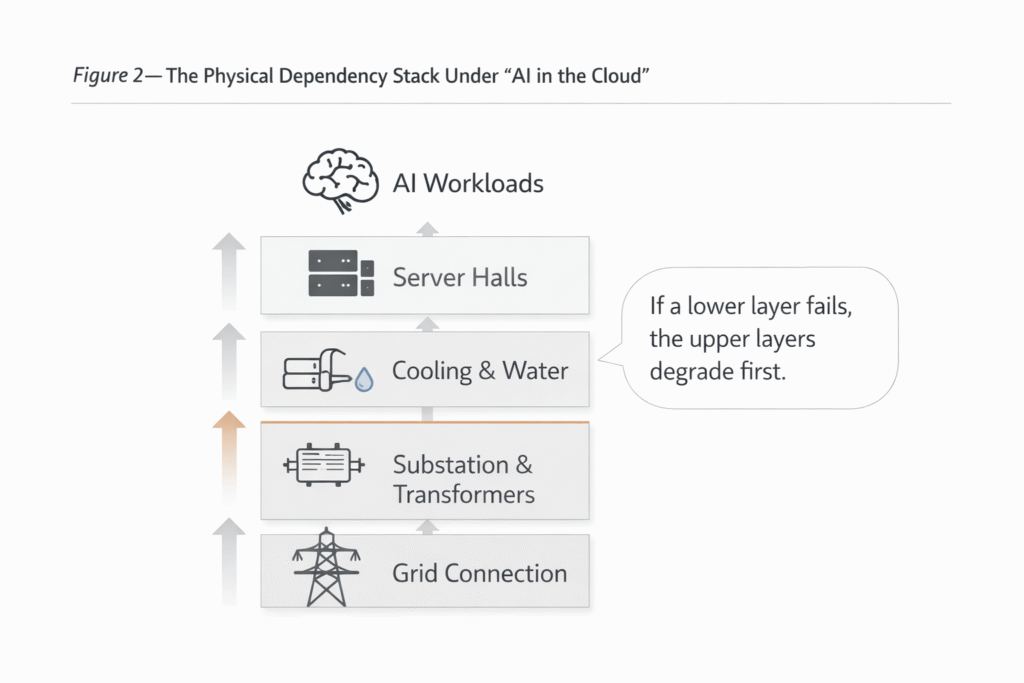

In practice, the bottleneck is often everything that has to be true for those GPUs to run at high utilization, 24/7, without embarrassing downtime. Data centers aren’t just server rooms anymore; hyperscale sites behave like industrial facilities, and the bill follows industrial rules.

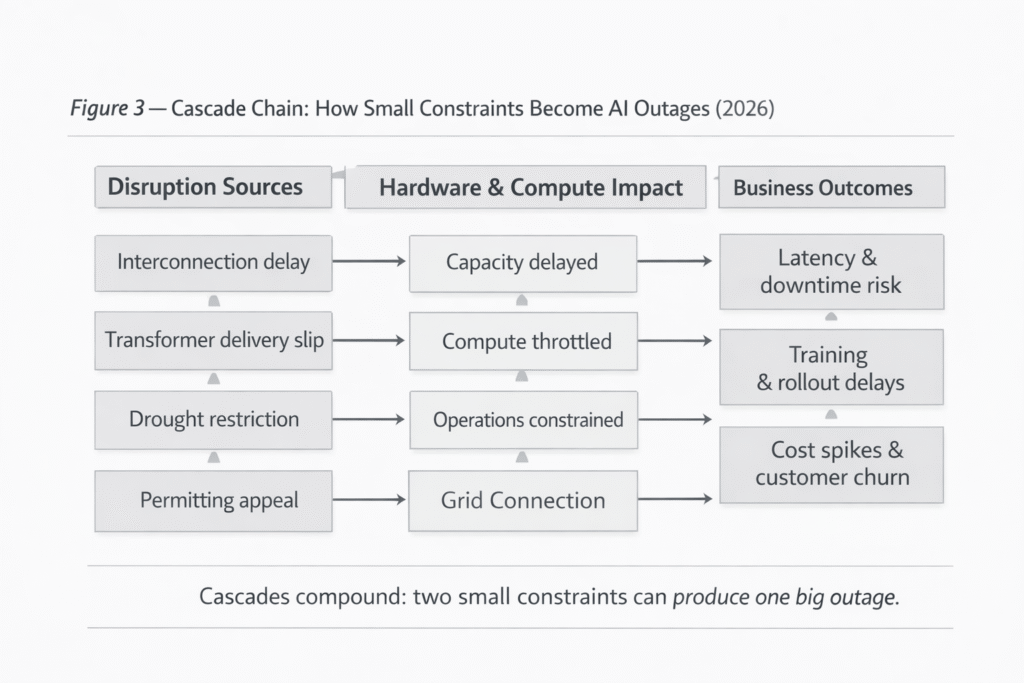

Here’s the part that gets missed: power delivery and reliability upgrades don’t scale linearly with your ambition. They step-function. You go from “we can connect you” to “we can connect you after a substation expansion and transmission work,” and suddenly your timeline becomes a negotiation with reality.
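To make the step-function idea concrete, here is a minimal sketch. The tier limits and upgrade names are hypothetical placeholders I made up for illustration, not figures from any utility or tariff. The point is only that a small change in requested megawatts can push a project into a different class of work.

```python
import bisect

# Hypothetical capacity tiers in MW and the upgrade each one triggers.
# These thresholds are illustrative only, not taken from any utility.
TIER_LIMITS_MW = [20, 80, 300]
TIER_UPGRADES = [
    "existing feeder",
    "substation expansion",
    "new substation plus transmission work",
    "regional transmission planning",
]


def required_upgrade(requested_mw: float) -> str:
    """Return the (hypothetical) upgrade tier a load request falls into."""
    if requested_mw <= 0:
        raise ValueError("requested_mw must be positive")
    # bisect_left keeps a request exactly at a limit in the lower tier.
    tier = bisect.bisect_left(TIER_LIMITS_MW, requested_mw)
    return TIER_UPGRADES[tier]


# One extra megawatt changes the whole conversation.
print(required_upgrade(80))  # substation expansion
print(required_upgrade(81))  # new substation plus transmission work
```

Real interconnection outcomes depend on studies of the specific system, so treat the lookup table as a stand-in for a process, not a model of one.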

In the U.S., the grid side of the story is increasingly explicit. Federal regulators have been pushing major grid operators to create clearer rules for serving AI-driven large loads because the current process strains reliability planning and customer cost allocation (FERC fact sheet: https://www.ferc.gov/news-events/news/fact-sheet-ferc-directs-nations-largest-grid-operator-create-new-rules-embrace).

Cost Map: Where the Bill Actually Lands

If I’m doing a quick “sanity check” on an AI infrastructure plan, I look at five buckets that tend to blow up budgets and timelines:

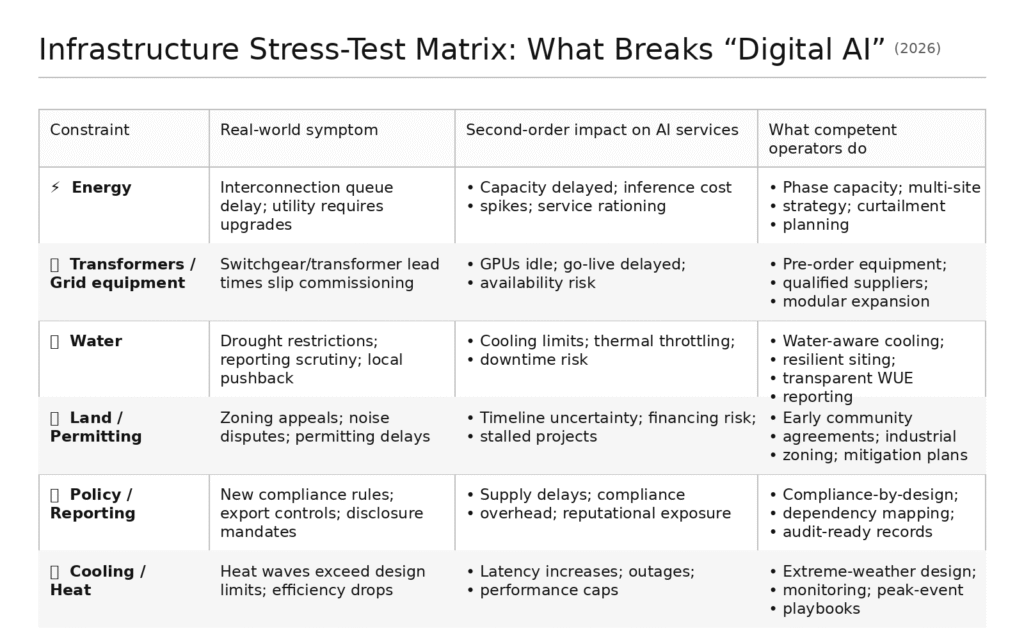

1. Power delivery (substation + transmission upgrades). The physical grid is not a vending machine. Long-lead equipment is a known choke point. DOE is blunt about large power transformers being expensive, customized, and slow to replace (DOE PDF: https://www.energy.gov/sites/default/files/2024-10/EXEC-2022-001242%20-%20Large%20Power%20Transformer%20Resilience%20Report%20signed%20by%20Secretary%20Granholm%20on%207-10-24.pdf). And when people in the industry talk about “transformer shortages,” they’re not being dramatic—lead times have stretched far beyond what normal project planning assumes (APPA summary of the national report: https://www.publicpower.org/periodical/article/national-council-publishes-report-addressing-power-transformer-shortage).

2. Cooling and water handling. Even “air-cooled” strategies still collide with water politics indirectly (through power generation and local water systems) and directly (through cooling loops). Water is a permitting and reputational constraint, not a minor utility input.

3. Building + fit-out. AI-friendly density raises heat rejection needs, redundancy expectations, and mechanical complexity. That changes the build, the MEP scope, and the commissioning risk.

4. Network backbone + fiber. If your site is far from backbone routes, you pay in time and trenching. “Cheap land” becomes expensive land quickly.

5. Redundancy (N+1 / 2N design). Reliability isn’t free. It is purchased—through extra electrical paths, backup systems, operational overhead, and, often, political negotiation about onsite generation.
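The redundancy bucket is the easiest one to put numbers on. The sketch below counts equipment units under the common N, N+1, and 2N conventions, where N is the number of units needed to carry the load with no spare. The function name, the 10 MW load, and the 2.5 MW unit size are assumptions chosen for illustration.

```python
import math


def units_required(it_load_mw: float, unit_capacity_mw: float, scheme: str) -> int:
    """Count equipment units (e.g. UPS modules or chillers) for a redundancy scheme."""
    if it_load_mw <= 0 or unit_capacity_mw <= 0:
        raise ValueError("load and unit capacity must be positive")
    # N is the minimum number of units that can carry the full load.
    n = math.ceil(it_load_mw / unit_capacity_mw)
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1      # one spare unit
    if scheme == "2N":
        return 2 * n      # a fully duplicated set
    raise ValueError(f"unknown scheme: {scheme}")


# Hypothetical example: 10 MW of IT load served by 2.5 MW units.
for scheme in ("N", "N+1", "2N"):
    print(scheme, units_required(10, 2.5, scheme))
# N 4
# N+1 5
# 2N 8
```

Going from N to 2N doubles the unit count in this example, and each of those units also needs floor space, maintenance, and a place in the procurement queue.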

For scale context: the International Energy Agency expects global data center electricity use to rise sharply this decade, with AI as a meaningful driver (IEA analysis: https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai). That’s not a tech story. It’s an infrastructure story.

Energy Isn’t Just a Utility Input. It’s the Throttle.

AI workloads don’t behave like office load. They behave like a high, steady industrial draw. That matters because it hits both capacity (can the grid deliver the megawatts?) and shape (how constant is the demand across day/night and seasons?).

Load shape is the part tech narratives skip.

A steady, high “load factor” can look attractive in a spreadsheet, but it compresses the margin utilities rely on to manage peaks, outages, and weather volatility. A widely cited industry analysis notes that data centers tend to operate at very high load factors and highlights examples like Dominion Virginia reporting an 82% load factor for large data centers in 2024 (Grid Strategies PDF: https://gridstrategiesllc.com/wp-content/uploads/Grid-Strategies-National-Load-Growth-Report-2025.pdf). When that pattern scales across regions, “interconnection” stops being a paperwork step and starts being a strategic constraint.
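Load factor is simply average demand divided by peak demand over a period. A minimal sketch, using two made-up four-interval profiles (the numbers are illustrative, not measured data):

```python
def load_factor(demand_mw: list[float]) -> float:
    """Average demand divided by peak demand over the sampled period."""
    if not demand_mw:
        raise ValueError("demand_mw must not be empty")
    peak = max(demand_mw)
    if peak <= 0:
        raise ValueError("peak demand must be positive")
    average = sum(demand_mw) / len(demand_mw)
    return average / peak


# Hypothetical profiles with the same 100 MW peak.
office_like = [20, 100, 100, 20]       # big swing between night and day
data_center_like = [90, 95, 100, 95]   # nearly flat, always close to peak

print(round(load_factor(office_like), 2))       # 0.6
print(round(load_factor(data_center_like), 2))  # 0.95
```

Both profiles need the same peak capacity, but the flat one leaves far fewer low-demand hours for the system to recover, schedule maintenance, or absorb a surprise.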

The IEA frames this tension clearly: electricity systems are being reshaped by electrification and new large loads—including data centers—at the same time reliability expectations keep rising (IEA report page: https://www.iea.org/reports/electricity-2024).

The Reliability Tax

Reliability is a policy debate disguised as an engineering choice.

If you build for “always-on,” you’re implicitly deciding who pays when the grid is strained. Backup generation, battery systems, and multiple feeds reduce your risk, but they can increase system complexity and local scrutiny. A community that was neutral about “a warehouse-looking building” becomes much less neutral once it learns the building comes with fuel storage, generators, and round-the-clock load.

And when the queue is long, reliability becomes a competition. Not just for megawatts, but for the equipment needed to safely deliver those megawatts—transformers, switchgear, protective relays, and the skilled labor to install them. DOE treats transformer resilience as a national issue for a reason (DOE PDF: https://www.energy.gov/sites/default/files/2024-10/EXEC-2022-001242%20-%20Large%20Power%20Transformer%20Resilience%20Report%20signed%20by%20Secretary%20Granholm%20on%207-10-24.pdf).

If your organization treats “power” as a line item instead of a strategic dependency, you’re not running AI. You’re renting it from the future.

Water: Cooling Becomes Politics

Most tech teams underestimate water because they think in compute terms, not civic terms.

Water has a different kind of fragility: it’s local, seasonal, and governed by community tolerance. When scarcity rises, the question is never “is water technically available?” The question is “who gets it, and who explains it?”

The evidence base is getting harder to ignore. OECD has pointed out that AI’s water footprint varies widely by location and cooling approach, and the public has limited visibility into how usage is measured and disclosed (OECD.AI analysis: https://oecd.ai/en/wonk/how-much-water-does-ai-consume). Brookings makes a similar point from a U.S. policy angle: the friction often comes from governance and transparency as much as from engineering (Brookings: https://www.brookings.edu/articles/ai-data-centers-and-water/).

Regulation is also tightening. The EU’s Energy Efficiency Directive includes obligations around monitoring and reporting for data centers—part of a broader push toward transparency on performance and footprint (EU Commission overview: https://energy.ec.europa.eu/topics/energy-efficiency/energy-efficiency-targets-directive-and-rules/energy-efficiency-directive_en).

If you want the AIchronicle lens on why this becomes a hard constraint (not a sidebar), read why AI infrastructure depends on water (https://theaichronicle.org/why-ai-infrastructure-depends-on-water/).

Land: The Quiet Constraint Nobody Models Well

Land is where “digital” stories go to die.

Because land isn’t one variable. It’s zoning, setbacks, floodplains, noise limits, transmission access, fiber access, truck access, tax agreements, and the unglamorous human fact that communities don’t like surprises.

The land footprint isn’t just the building. It’s the right-of-way. It’s the substation. It’s the cooling gear. It’s the security perimeter. It’s the buffer your lawyer asks for after the first complaint.

And “cheap land” is a trap phrase. The real question is whether the land is buildable and already has access to power and fiber.

What data-center proposals routinely underestimate

How long interconnection studies and upgrades take once the utility starts treating the project as a system-level planning issue (useful regulatory context: https://www.ferc.gov/news-events/news/fact-sheet-ferc-directs-nations-largest-grid-operator-create-new-rules-embrace)

Transformer and switchgear lead times during periods of grid equipment scarcity (industry summary: https://www.publicpower.org/periodical/article/national-council-publishes-report-addressing-power-transformer-shortage)

Permitting “latency”: hearings, appeals, noise studies, and community concessions that don’t show up in engineering Gantt charts

Water constraints that change mid-project due to drought rules or municipal supply priorities (policy context: https://energy.ec.europa.eu/topics/energy-efficiency/energy-efficiency-targets-directive-and-rules/energy-efficiency-directive_en)

The hidden footprint of redundancy (extra equipment, extra space, extra operational overhead)

The PR and governance cost of being perceived as competing with residents for local resources (discussion: https://www.brookings.edu/articles/ai-data-centers-and-water/)

The reality that “data center jobs” arguments land differently than “data center load” arguments
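One way to see why the schedule items in the list above matter: if the major tracks run in parallel, the slowest one sets the date, no matter how fast the building goes up. The sketch below assumes fully independent parallel tracks, which is a simplification, and every duration in it is a hypothetical number for illustration.

```python
def slowest_track(track_months: dict[str, int]) -> tuple[str, int]:
    """Return the name and duration of the track that gates the project.

    Assumes the tracks run in parallel and do not depend on each other.
    """
    if not track_months:
        raise ValueError("track_months must not be empty")
    name = max(track_months, key=track_months.get)
    return name, track_months[name]


# Hypothetical durations in months, not sourced from any real project.
tracks = {
    "building shell and fit-out": 18,
    "permitting and hearings": 24,
    "transformer and switchgear delivery": 30,
    "interconnection study and upgrades": 36,
}

name, months = slowest_track(tracks)
print(f"Gating track: {name} ({months} months)")
```

In practice the tracks interact (a hearing outcome can reset a design, for example), so a real plan would need a dependency graph instead of a simple maximum.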

The Infrastructure Stress Test: 6 Failure Modes That Break “Digital AI”

If you want a practical way to think about this, stop thinking like a product team and start thinking like an operator.

The infrastructure world runs on failure modes.

Stress-Test Matrix

Contrarian Take: “Efficiency” is Becoming a Comfort Story

I hear the same reassurance constantly: “Models are getting more efficient. Chips are improving. This will level out.”

Maybe. But “efficiency” is increasingly used as emotional anesthesia.

Two reasons.

First, rebound effects are real. When inference gets cheaper, demand doesn’t politely hold still. It expands into new product surfaces, new workflows, and new expectations. That’s why the IEA treats AI and data centers as a meaningful driver of electricity planning pressure, not a side note (IEA: https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai).

Second, constraints migrate. You don’t eliminate bottlenecks—you trade them. The story shifts from “compute availability” to “where can we build,” “where can we power,” and “where can we cool without social blowback.” The “digital” layer becomes increasingly downstream of civic and industrial limits (IEA electricity planning context: https://www.iea.org/reports/electricity-2024).

Efficiency helps. It does not automatically make the physical world irrelevant.
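The rebound argument is simple arithmetic: total energy is energy per unit of work times units of work. A minimal sketch, with hypothetical percentages that are not forecasts:

```python
def total_energy_change(efficiency_gain: float, demand_growth: float) -> float:
    """Fractional change in total energy use.

    efficiency_gain: fractional drop in energy per unit of work (0.5 = half).
    demand_growth: fractional rise in units of work (2.0 = three times as many).
    """
    if not 0 <= efficiency_gain < 1:
        raise ValueError("efficiency_gain must be in [0, 1)")
    if demand_growth < -1:
        raise ValueError("demand_growth cannot be below -1")
    return (1 - efficiency_gain) * (1 + demand_growth) - 1


# Hypothetical: each query uses half the energy, but queries triple.
print(total_energy_change(0.5, 2.0))  # 0.5, i.e. total energy up 50%

# Break-even: halving energy per query is cancelled by doubling queries.
print(total_energy_change(0.5, 1.0))  # 0.0
```

Efficiency only lowers the total if demand grows more slowly than per-unit energy falls.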

Real-World Context: Who’s Actually Paying for This?

This is where the debate gets uncomfortable, because the cost isn’t paid in one place.

Hyperscalers pay for land, construction, and a portion of grid upgrades. Utilities socialize some costs. Regulators attempt to draw lines. Communities pay in opportunity cost: land use, water stress, infrastructure prioritization, and occasionally higher rates if cost allocation is mishandled.

That tension is why governance frameworks matter even for “infrastructure” conversations. Risk isn’t only model behavior; it’s dependency risk. If you want a governance lens that translates well across sectors, NIST’s AI RMF is one of the few broadly usable references for thinking about risk ownership and transparency (NIST PDF: https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf).

If you want the upstream geopolitics connection—because infrastructure and supply chains are fused now—see Who Controls the Chips Controls the Future (https://theaichronicle.org/who-controls-the-chips-controls-the-future-ai-and-semiconductor-power/).

For the energy-and-emissions side of the same reality, connect this to the environmental cost of training large AI models (https://theaichronicle.org/the-environmental-cost-of-training-large-ai-models/).

The Analyst’s Verdict: 2027–2028

By 2027, the “infrastructure transparency” era stops being optional in more jurisdictions. The EU’s direction on data center monitoring and reporting is a preview: governments will demand clearer disclosure of energy performance and water footprint because planners can’t manage what they can’t see (EU Commission overview: https://energy.ec.europa.eu/topics/energy-efficiency/energy-efficiency-targets-directive-and-rules/energy-efficiency-directive_en).

By 2028, I expect at least one major AI service outage story to be traced primarily to an upstream physical constraint—grid equipment lead times, interconnection limits, or water restrictions—not a “bug” and not a cyberattack. That’s testable: watch public incident reporting, utility filings, and whether “capacity throttling” becomes a routine disclosure in infrastructure-heavy regions.

The takeaway is simple, even if the fix isn’t: the most advanced AI in the world still runs on land, water, and electrons. Ignore that, and you don’t get a smarter future. You get an intermittently available one.

By Sami Hayes – AIchronicle Insights
